Understanding AI Governance

AI Governance — Module 1 of 4

Learning objectives

“As someone new to AI governance, I want to understand key concepts, how AI governance differs from model risk management, and how ValidMind supports governance workflows.”

This first module is part of a four-part series:

AI Governance

Module 1 — Contents

Training is interactive — you explore ValidMind live. Try it!

What is AI governance?

Defining AI governance

AI governance is the organizational framework for directing and overseeing how AI is designed, deployed, and used. It sets:

Policy and standards

Accountability and decision rights

Lifecycle controls

Ongoing oversight

Unit of management

In AI governance, the primary unit of management is the AI system or AI use case — not the individual model.

Focuses on:

- How AI is used

- Impact on stakeholders

- Organizational accountability

Applies to:

- Model-based AI

- Non-model AI systems

- Automated decision systems
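The idea that the use case, not the model, is the unit of management can be sketched as a small data structure. This is a minimal illustration only: the `AIUseCase` and `Model` types, their fields, and the example values are hypothetical and are not part of any ValidMind API. It assumes a use case may reference zero or more models, so non-model AI systems fit the same record.

```python
from dataclasses import dataclass, field


@dataclass
class Model:
    """A single model (hypothetical type, for illustration only)."""
    name: str


@dataclass
class AIUseCase:
    """An AI use case, the unit that AI governance tracks (hypothetical type)."""
    name: str
    owner: str
    stakeholders: list[str] = field(default_factory=list)
    # Assumption: a use case may rely on several models, or on none at all.
    models: list[Model] = field(default_factory=list)

    @property
    def is_model_based(self) -> bool:
        """True when the use case relies on at least one model."""
        return len(self.models) > 0


# Illustrative records with made-up names.
credit_triage = AIUseCase(
    name="Loan application triage",
    owner="Retail lending",
    stakeholders=["Applicants", "Compliance"],
    models=[Model("default_probability"), Model("fraud_score")],
)
rules_engine = AIUseCase(name="Rule-based claims routing", owner="Claims operations")
```

Both records are governed the same way, even though only the first one contains models.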

AI governance vs MRM

Parallel use cases

AI governance and model risk management (MRM) are parallel use cases — not subsets of each other.

| Aspect | AI Governance | MRM |
|---|---|---|
| Unit of management | AI system / use case | Model |
| Objective | Organizational oversight | Technical risk control |
| Scope | Broad — ethics, compliance | Narrow — performance, validation |

Relationship

Key terminology

AI governance terms

Units of oversight:

- AI system

- AI application

- AI use case

- Automated decision system

Risk framing:

- AI risk

- Use case risk

- Impact / harm

- Ethical risk

Classification and lifecycle

Classification:

- Risk tier

- Impact level

- Criticality

- Prohibited / high-risk / limited-risk

Lifecycle:

- Intake

- Approval

- Deployment

- Human oversight

- Retirement
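The classification and lifecycle terms above can be sketched as enumerations attached to a use case record. This is a rough sketch under stated assumptions: `RiskTier`, `Stage`, and `UseCaseRecord` are hypothetical names rather than a ValidMind API, and treating the lifecycle terms as a strict sequence in the order listed is a simplification made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    """Risk classification labels taken from the list above."""
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    LIMITED_RISK = "limited-risk"


class Stage(Enum):
    """Lifecycle stages; the strict ordering is an assumption of this sketch."""
    INTAKE = 1
    APPROVAL = 2
    DEPLOYMENT = 3
    HUMAN_OVERSIGHT = 4
    RETIREMENT = 5


@dataclass
class UseCaseRecord:
    """A use case with its classification and current stage (hypothetical type)."""
    name: str
    risk_tier: RiskTier
    stage: Stage = Stage.INTAKE

    def advance(self) -> Stage:
        """Move to the next stage in the assumed order and return it."""
        if self.stage is Stage.RETIREMENT:
            raise ValueError(f"{self.name} is already retired")
        self.stage = Stage(self.stage.value + 1)
        return self.stage


record = UseCaseRecord("Loan application triage", RiskTier.HIGH_RISK)
record.advance()  # the record moves from intake to approval
```

A real process would likely gate each transition on reviews and sign-offs; the sketch only shows how the terms relate to one another.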

Platform orientation

ValidMind for AI governance

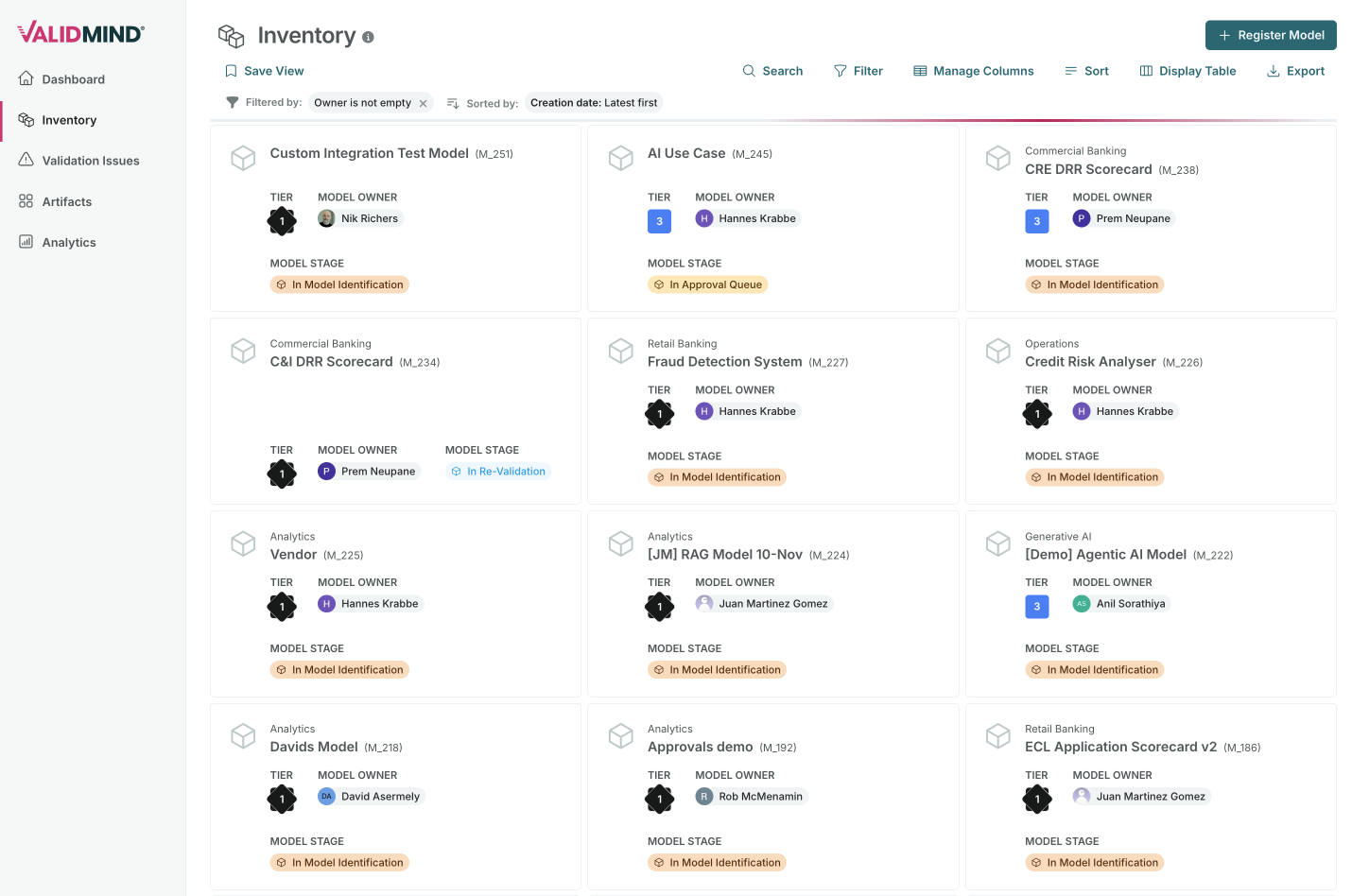

ValidMind supports AI governance through:

- Inventory — Track AI systems, use cases, owners, stakeholders, and more

- Custom fields — Configure risk tiers, impact levels, and more

- Workflows — Intake, approval, and lifecycle processes

- Documentation — Run testing and generate documentation

- Validation — Identify and track issues

- Dashboards — Monitor compliance

Next steps

Continue to Module 2 to learn about managing AI use cases in ValidMind.
