Learn how to use ValidMind for your end-to-end model validation process based on common scenarios with our series of four introductory notebooks. In this first notebook, set up the ValidMind Library in preparation for validating a champion model.

These notebooks use a binary classification model as an example, but the same principles shown here apply to other model types.

Model validation aims to independently assess the compliance of champion models created by model developers with regulatory guidance by conducting thorough testing and analysis, potentially including the use of challenger models to benchmark performance. Assessments, presented in the form of a validation report, typically include artifacts (findings) and recommendations to address those issues.

In churn analysis, a binary classification model is a predictive model that identifies customers who are likely to leave a service or subscription by analyzing behavioral, transactional, and demographic factors.

ValidMind is a suite of tools for managing model risk, including risk associated with AI and statistical models.

You use the ValidMind Library to automate comparison and other validation tests, and then use the ValidMind Platform to submit compliance assessments of champion models via comprehensive validation reports. Together, these products simplify model risk management, facilitate compliance with regulations and institutional standards, and enhance collaboration between yourself and model developers.

This notebook assumes you have basic familiarity with Python, including an understanding of how functions work. If you are new to Python, you can still run the notebook but we recommend further familiarizing yourself with the language.

If you encounter errors due to missing modules in your Python environment, install the modules with pip install, and then re-run the notebook. For more help, refer to Installing Python Modules.

If you haven't already seen our documentation on the ValidMind Library, we recommend you begin by exploring the available resources in this section. There, you can learn more about documenting models and running tests, as well as find code samples and our Python Library API reference.

Validation report: A comprehensive and structured assessment of a model’s development and performance, focusing on verifying its integrity, appropriateness, and alignment with its intended use. It includes analyses of model assumptions, data quality, performance metrics, outcomes of testing procedures, and risk considerations. The validation report supports transparency, regulatory compliance, and informed decision-making by documenting the validator’s independent review and conclusions.

Validation report template: Serves as a standardized framework for conducting and documenting model validation activities. It outlines the required sections, recommended analyses, and expected validation tests, ensuring consistency and completeness across validation reports. The template helps guide validators through a systematic review process while promoting comparability and traceability of validation outcomes.

Tests: Functions contained in the ValidMind Library, each designed to run a specific quantitative test on a dataset or model. Tests are the building blocks of ValidMind, used to evaluate and document models and datasets.

Metrics: A subset of tests that do not have thresholds. In the context of this notebook, metrics and tests can be thought of as interchangeable concepts.

Custom metrics: Custom metrics are functions that you define to evaluate your model or dataset. These functions can be registered with the ValidMind Library to be used in the ValidMind Platform.

Inputs: Objects to be evaluated and documented in the ValidMind Library. They can be any of the following:

A model initialized with vm.init_model().

A dataset initialized with vm.init_dataset().

Parameters: Additional arguments that can be passed when running a ValidMind test, used to pass additional information to a metric, customize its behavior, or provide additional context.

Outputs: Custom metrics can return elements like tables or plots. Tables may be a list of dictionaries (each representing a row) or a pandas DataFrame. Plots may be matplotlib or plotly figures.
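To make the Inputs, Parameters, and Outputs definitions concrete, here is a minimal sketch in plain Python. It does not call the ValidMind Library: the function name `class_balance_table` and its `min_percent_threshold` parameter are hypothetical, and registering such a function with the library is not shown. The sketch only illustrates a function that takes data as an input, accepts a parameter, and returns a table as a list of dictionaries, one per row.

```python
from collections import Counter


def class_balance_table(labels, min_percent_threshold=10):
    """Return one row per class with its share of the data.

    Hypothetical example: `labels` plays the role of an input,
    `min_percent_threshold` the role of a parameter, and the returned
    list of dictionaries the role of a table output.
    """
    total = len(labels)
    if total == 0:
        return []  # nothing to summarize

    rows = []
    for value, count in sorted(Counter(labels).items()):
        percent = 100 * count / total
        rows.append(
            {
                "class": value,
                "count": count,
                "percent": round(percent, 2),
                # A row passes when the class share meets the threshold
                "passed": percent >= min_percent_threshold,
            }
        )
    return rows


# Toy churn labels: 1 = customer left, 0 = customer stayed
rows = class_balance_table([0, 0, 0, 0, 0, 0, 0, 0, 0, 1], min_percent_threshold=20)
for row in rows:
    print(row)
```

Dropping the `passed` column would turn this into a metric in the sense defined above: the same computation with no threshold applied.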

In a usual model lifecycle, a champion model will have been independently registered in your model inventory and submitted to you for validation by your model development team as part of the effective challenge process. (Learn more: Submit for approval)

For this notebook, we'll have you register a dummy model in the ValidMind Platform inventory and assign yourself as the validator to familiarize you with the ValidMind interface and circumvent the need for an existing model:

In a browser, log in to ValidMind.

In the left sidebar, navigate to Inventory and click + Register Model.

Enter the model details and click Next > to continue to assignment of model stakeholders. (Need more help?)

Select your own name under the MODEL OWNER drop-down — don’t worry, we’ll adjust these permissions next for validation.

Click Register Model to add the model to your inventory.

In order to log tests as a validator instead of as a developer, on the model details page that appears after you've successfully registered your sample model:

Remove yourself as a model owner:

Remove yourself as a developer:

Add yourself as a validator:

Once you've registered your model, let's select a documentation template. A template predefines sections for your model documentation and provides a general outline to follow, making the documentation process much easier for developers.

We'll need this documentation template later for reference as we draft our validation report:

In the left sidebar that appears for your model, click Documents and select Documentation.

Under TEMPLATE, select Binary classification.

Click Use Template to apply the template.

Next, let's select a validation report template. A template predefines sections for your report and provides a general outline to follow, making the validation process much easier.

In the left sidebar that appears for your model, click Documents and select Validation.

Under TEMPLATE, select Generic Validation Report.

Click Use Template to apply the template.

To install the library:

%pip install -q validmind

Note: you may need to restart the kernel to use updated packages.

Initialize the ValidMind Library with the code snippet unique to each model per document, ensuring your test results are uploaded to the correct model and automatically populated in the right document in the ValidMind Platform when you run this notebook.

Select Validation from the DOCUMENT drop-down menu.

Load your model identifier credentials from an .env file or replace the placeholder with your own code snippet:

# Load your model identifier credentials from an `.env` file

%load_ext dotenv
%dotenv .env

# Or replace with your code snippet

import validmind as vm

vm.init(
    # api_host="...",
    # api_key="...",
    # api_secret="...",
    # model="...",
    document="validation-report",
)

2026-04-07 23:07:46,478 - INFO(validmind.api_client): 🎉 Connected to ValidMind!
📊 Model: [ValidMind Academy] Model validation (ID: cmalguc9y02ok199q2db381ib)
📁 Document Type: validation_report

Let's verify that you have connected the ValidMind Library to the ValidMind Platform and that the appropriate template is selected for your model.
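If the connection message does not appear, a frequent cause is that the credentials were never loaded from the .env file. The sketch below is one way to check for that before calling vm.init(). The variable names in `EXPECTED_VARS` are an assumption, not something this notebook confirms, so compare them with the names in your own code snippet or .env file. The helper `missing_credentials` is hypothetical and not part of the ValidMind Library.

```python
import os

# Assumed variable names; adjust to match your own .env file or code snippet
EXPECTED_VARS = ("VM_API_HOST", "VM_API_KEY", "VM_API_SECRET", "VM_API_MODEL")


def missing_credentials(env=None, expected=EXPECTED_VARS):
    """Return the expected variable names that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in expected if not env.get(name)]


# Example with an explicit mapping instead of the real environment
sample_env = {"VM_API_HOST": "https://example.invalid/api", "VM_API_KEY": "abc"}
print(missing_credentials(sample_env))
```

Called with no arguments, the helper inspects the current process environment, which is where the dotenv extension places the values it reads.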

You will attach evidence to this template in the form of risk assessment notes, artifacts, and test results later on. For now, take a look at the default structure that the template provides with the vm.preview_template() function from the ValidMind Library:

vm.preview_template()

Next, let's head to the ValidMind Platform to see the template in action:

In a browser, log in to ValidMind.

In the left sidebar, navigate to Inventory and select the model you registered for this "ValidMind for model validation" series of notebooks.

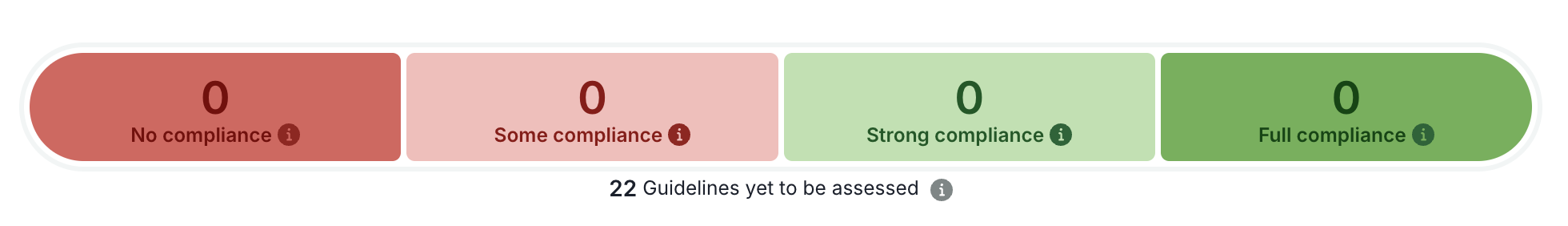

Click Validation under Documents for your model and note:

Next, let's explore the list of all available tests in the ValidMind Library with the vm.tests.list_tests() function — we'll later narrow down the tests we want to run from this list when we learn to run tests.
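One way to picture the narrowing step: each entry in the listing carries an ID, tags, and tasks, so selecting tests for a binary classification model amounts to filtering on those fields. The sketch below does this in plain Python over a few entries copied by hand from the listing. `SAMPLE_TESTS` and `narrow_tests` are hypothetical names and are not part of the ValidMind Library, which may offer its own filtering options.

```python
# A few entries copied from the library's listing; only ID, tags, and tasks are kept
SAMPLE_TESTS = [
    {
        "id": "validmind.data_validation.ClassImbalance",
        "tags": ["tabular_data", "binary_classification",
                 "multiclass_classification", "data_quality"],
        "tasks": ["classification"],
    },
    {
        "id": "validmind.data_validation.ADF",
        "tags": ["time_series_data", "statsmodels", "forecasting",
                 "statistical_test", "stationarity"],
        "tasks": ["regression"],
    },
    {
        "id": "validmind.data_validation.MissingValues",
        "tags": ["tabular_data", "data_quality"],
        "tasks": ["classification", "regression"],
    },
    {
        "id": "validmind.model_validation.FeaturesAUC",
        "tags": ["feature_importance", "AUC", "visualization"],
        "tasks": ["classification"],
    },
]


def narrow_tests(tests, task=None, tag=None, namespace=None):
    """Return the IDs of tests matching every criterion that is given."""
    selected = []
    for test in tests:
        if task is not None and task not in test["tasks"]:
            continue
        if tag is not None and tag not in test["tags"]:
            continue
        # Test IDs are dotted paths, so a namespace is a leading prefix
        if namespace is not None and not test["id"].startswith(namespace + "."):
            continue
        selected.append(test["id"])
    return selected


# Data quality tests that apply to classification models
print(narrow_tests(SAMPLE_TESTS, task="classification", tag="data_quality"))
```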

vm.tests.list_tests()

| ID | Name | Description | Has Figure | Has Table | Required Inputs | Params | Tags | Tasks |

|---|---|---|---|---|---|---|---|---|

| validmind.data_validation.ACFandPACFPlot | AC Fand PACF Plot | Analyzes time series data using Autocorrelation Function (ACF) and Partial Autocorrelation Function (PACF) plots to... | True | False | ['dataset'] | {} | ['time_series_data', 'forecasting', 'statistical_test', 'visualization'] | ['regression'] |

| validmind.data_validation.ADF | ADF | Assesses the stationarity of a time series dataset using the Augmented Dickey-Fuller (ADF) test.... | False | True | ['dataset'] | {} | ['time_series_data', 'statsmodels', 'forecasting', 'statistical_test', 'stationarity'] | ['regression'] |

| validmind.data_validation.AutoAR | Auto AR | Automatically identifies the optimal Autoregressive (AR) order for a time series using BIC and AIC criteria.... | False | True | ['dataset'] | {'max_ar_order': {'type': 'int', 'default': 3}} | ['time_series_data', 'statsmodels', 'forecasting', 'statistical_test'] | ['regression'] |

| validmind.data_validation.AutoMA | Auto MA | Automatically selects the optimal Moving Average (MA) order for each variable in a time series dataset based on... | False | True | ['dataset'] | {'max_ma_order': {'type': 'int', 'default': 3}} | ['time_series_data', 'statsmodels', 'forecasting', 'statistical_test'] | ['regression'] |

| validmind.data_validation.AutoStationarity | Auto Stationarity | Automates Augmented Dickey-Fuller test to assess stationarity across multiple time series in a DataFrame.... | False | True | ['dataset'] | {'max_order': {'type': 'int', 'default': 5}, 'threshold': {'type': 'float', 'default': 0.05}} | ['time_series_data', 'statsmodels', 'forecasting', 'statistical_test'] | ['regression'] |

| validmind.data_validation.BivariateScatterPlots | Bivariate Scatter Plots | Generates bivariate scatterplots to visually inspect relationships between pairs of numerical predictor variables... | True | False | ['dataset'] | {} | ['tabular_data', 'numerical_data', 'visualization'] | ['classification'] |

| validmind.data_validation.BoxPierce | Box Pierce | Detects autocorrelation in time-series data through the Box-Pierce test to validate model performance.... | False | True | ['dataset'] | {} | ['time_series_data', 'forecasting', 'statistical_test', 'statsmodels'] | ['regression'] |

| validmind.data_validation.ChiSquaredFeaturesTable | Chi Squared Features Table | Assesses the statistical association between categorical features and a target variable using the Chi-Squared test.... | False | True | ['dataset'] | {'p_threshold': {'type': '_empty', 'default': 0.05}} | ['tabular_data', 'categorical_data', 'statistical_test'] | ['classification'] |

| validmind.data_validation.ClassImbalance | Class Imbalance | Evaluates and quantifies class distribution imbalance in a dataset used by a machine learning model.... | True | True | ['dataset'] | {'min_percent_threshold': {'type': 'int', 'default': 10}} | ['tabular_data', 'binary_classification', 'multiclass_classification', 'data_quality'] | ['classification'] |

| validmind.data_validation.DatasetDescription | Dataset Description | Provides comprehensive analysis and statistical summaries of each column in a machine learning model's dataset.... | False | True | ['dataset'] | {} | ['tabular_data', 'time_series_data', 'text_data'] | ['classification', 'regression', 'text_classification', 'text_summarization'] |

| validmind.data_validation.DatasetSplit | Dataset Split | Evaluates and visualizes the distribution proportions among training, testing, and validation datasets of an ML... | False | True | ['datasets'] | {} | ['tabular_data', 'time_series_data', 'text_data'] | ['classification', 'regression', 'text_classification', 'text_summarization'] |

| validmind.data_validation.DescriptiveStatistics | Descriptive Statistics | Performs a detailed descriptive statistical analysis of both numerical and categorical data within a model's... | False | True | ['dataset'] | {} | ['tabular_data', 'time_series_data', 'data_quality'] | ['classification', 'regression'] |

| validmind.data_validation.DickeyFullerGLS | Dickey Fuller GLS | Assesses stationarity in time series data using the Dickey-Fuller GLS test to determine the order of integration.... | False | True | ['dataset'] | {} | ['time_series_data', 'forecasting', 'unit_root_test'] | ['regression'] |

| validmind.data_validation.Duplicates | Duplicates | Tests dataset for duplicate entries, ensuring model reliability via data quality verification.... | False | True | ['dataset'] | {'min_threshold': {'type': '_empty', 'default': 1}} | ['tabular_data', 'data_quality', 'text_data'] | ['classification', 'regression'] |

| validmind.data_validation.EngleGrangerCoint | Engle Granger Coint | Assesses the degree of co-movement between pairs of time series data using the Engle-Granger cointegration test.... | False | True | ['dataset'] | {'threshold': {'type': 'float', 'default': 0.05}} | ['time_series_data', 'statistical_test', 'forecasting'] | ['regression'] |

| validmind.data_validation.FeatureTargetCorrelationPlot | Feature Target Correlation Plot | Visualizes the correlation between input features and the model's target output in a color-coded horizontal bar... | True | False | ['dataset'] | {'fig_height': {'type': '_empty', 'default': 600}} | ['tabular_data', 'visualization', 'correlation'] | ['classification', 'regression'] |

| validmind.data_validation.HighCardinality | High Cardinality | Assesses the number of unique values in categorical columns to detect high cardinality and potential overfitting.... | False | True | ['dataset'] | {'num_threshold': {'type': 'int', 'default': 100}, 'percent_threshold': {'type': 'float', 'default': 0.1}, 'threshold_type': {'type': 'str', 'default': 'percent'}} | ['tabular_data', 'data_quality', 'categorical_data'] | ['classification', 'regression'] |

| validmind.data_validation.HighPearsonCorrelation | High Pearson Correlation | Identifies highly correlated feature pairs in a dataset suggesting feature redundancy or multicollinearity.... | False | True | ['dataset'] | {'max_threshold': {'type': 'float', 'default': 0.3}, 'top_n_correlations': {'type': 'int', 'default': 10}, 'feature_columns': {'type': 'list', 'default': None}} | ['tabular_data', 'data_quality', 'correlation'] | ['classification', 'regression'] |

| validmind.data_validation.IQROutliersBarPlot | IQR Outliers Bar Plot | Visualizes outlier distribution across percentiles in numerical data using the Interquartile Range (IQR) method.... | True | False | ['dataset'] | {'threshold': {'type': 'float', 'default': 1.5}, 'fig_width': {'type': 'int', 'default': 800}} | ['tabular_data', 'visualization', 'numerical_data'] | ['classification', 'regression'] |

| validmind.data_validation.IQROutliersTable | IQR Outliers Table | Determines and summarizes outliers in numerical features using the Interquartile Range method.... | False | True | ['dataset'] | {'threshold': {'type': 'float', 'default': 1.5}} | ['tabular_data', 'numerical_data'] | ['classification', 'regression'] |

| validmind.data_validation.IsolationForestOutliers | Isolation Forest Outliers | Detects outliers in a dataset using the Isolation Forest algorithm and visualizes results through scatter plots.... | True | False | ['dataset'] | {'random_state': {'type': 'int', 'default': 0}, 'contamination': {'type': 'float', 'default': 0.1}, 'feature_columns': {'type': 'list', 'default': None}} | ['tabular_data', 'anomaly_detection'] | ['classification'] |

| validmind.data_validation.JarqueBera | Jarque Bera | Assesses normality of dataset features in an ML model using the Jarque-Bera test.... | False | True | ['dataset'] | {} | ['tabular_data', 'data_distribution', 'statistical_test', 'statsmodels'] | ['classification', 'regression'] |

| validmind.data_validation.KPSS | KPSS | Assesses the stationarity of time-series data in a machine learning model using the KPSS unit root test.... | False | True | ['dataset'] | {} | ['time_series_data', 'stationarity', 'unit_root_test', 'statsmodels'] | ['data_validation'] |

| validmind.data_validation.LJungBox | L Jung Box | Assesses autocorrelations in dataset features by performing a Ljung-Box test on each feature.... | False | True | ['dataset'] | {} | ['time_series_data', 'forecasting', 'statistical_test', 'statsmodels'] | ['regression'] |

| validmind.data_validation.LaggedCorrelationHeatmap | Lagged Correlation Heatmap | Assesses and visualizes correlation between target variable and lagged independent variables in a time-series... | True | False | ['dataset'] | {'num_lags': {'type': 'int', 'default': 10}} | ['time_series_data', 'visualization'] | ['regression'] |

| validmind.data_validation.MissingValues | Missing Values | Evaluates dataset quality by ensuring missing value percentage across all features does not exceed a set threshold.... | False | True | ['dataset'] | {'min_percentage_threshold': {'type': 'float', 'default': 1.0}} | ['tabular_data', 'data_quality'] | ['classification', 'regression'] |

| validmind.data_validation.MissingValuesBarPlot | Missing Values Bar Plot | Assesses the percentage and distribution of missing values in the dataset via a bar plot, with emphasis on... | True | False | ['dataset'] | {'threshold': {'type': 'int', 'default': 80}, 'fig_height': {'type': 'int', 'default': 600}} | ['tabular_data', 'data_quality', 'visualization'] | ['classification', 'regression'] |

| validmind.data_validation.MutualInformation | Mutual Information | Calculates mutual information scores between features and target variable to evaluate feature relevance.... | True | False | ['dataset'] | {'min_threshold': {'type': 'float', 'default': 0.01}, 'task': {'type': 'str', 'default': 'classification'}} | ['feature_selection', 'data_analysis'] | ['classification', 'regression'] |

| validmind.data_validation.PearsonCorrelationMatrix | Pearson Correlation Matrix | Evaluates linear dependency between numerical variables in a dataset via a Pearson Correlation coefficient heat map.... | True | False | ['dataset'] | {} | ['tabular_data', 'numerical_data', 'correlation'] | ['classification', 'regression'] |

| validmind.data_validation.PhillipsPerronArch | Phillips Perron Arch | Assesses the stationarity of time series data in each feature of the ML model using the Phillips-Perron test.... | False | True | ['dataset'] | {} | ['time_series_data', 'forecasting', 'statistical_test', 'unit_root_test'] | ['regression'] |

| validmind.data_validation.ProtectedClassesDescription | Protected Classes Description | Visualizes the distribution of protected classes in the dataset relative to the target variable... | True | True | ['dataset'] | {'protected_classes': {'type': '_empty', 'default': None}} | ['bias_and_fairness', 'descriptive_statistics'] | ['classification', 'regression'] |

| validmind.data_validation.RollingStatsPlot | Rolling Stats Plot | Evaluates the stationarity of time series data by plotting its rolling mean and standard deviation over a specified... | True | False | ['dataset'] | {'window_size': {'type': 'int', 'default': 12}} | ['time_series_data', 'visualization', 'stationarity'] | ['regression'] |

| validmind.data_validation.RunsTest | Runs Test | Executes Runs Test on ML model to detect non-random patterns in output data sequence.... | False | True | ['dataset'] | {} | ['tabular_data', 'statistical_test', 'statsmodels'] | ['classification', 'regression'] |

| validmind.data_validation.ScatterPlot | Scatter Plot | Assesses visual relationships, patterns, and outliers among features in a dataset through scatter plot matrices.... | True | False | ['dataset'] | {} | ['tabular_data', 'visualization'] | ['classification', 'regression'] |

| validmind.data_validation.ScoreBandDefaultRates | Score Band Default Rates | Analyzes default rates and population distribution across credit score bands.... | False | True | ['dataset', 'model'] | {'score_column': {'type': 'str', 'default': 'score'}, 'score_bands': {'type': 'list', 'default': None}} | ['visualization', 'credit_risk', 'scorecard'] | ['classification'] |

| validmind.data_validation.SeasonalDecompose | Seasonal Decompose | Assesses patterns and seasonality in a time series dataset by decomposing its features into foundational components.... | True | False | ['dataset'] | {'seasonal_model': {'type': 'str', 'default': 'additive'}} | ['time_series_data', 'seasonality', 'statsmodels'] | ['regression'] |

| validmind.data_validation.ShapiroWilk | Shapiro Wilk | Evaluates feature-wise normality of training data using the Shapiro-Wilk test.... | False | True | ['dataset'] | {} | ['tabular_data', 'data_distribution', 'statistical_test'] | ['classification', 'regression'] |

| validmind.data_validation.Skewness | Skewness | Evaluates the skewness of numerical data in a dataset to check against a defined threshold, aiming to ensure data... | False | True | ['dataset'] | {'max_threshold': {'type': '_empty', 'default': 1}} | ['data_quality', 'tabular_data'] | ['classification', 'regression'] |

| validmind.data_validation.SpreadPlot | Spread Plot | Assesses potential correlations between pairs of time series variables through visualization to enhance... | True | False | ['dataset'] | {} | ['time_series_data', 'visualization'] | ['regression'] |

| validmind.data_validation.TabularCategoricalBarPlots | Tabular Categorical Bar Plots | Generates and visualizes bar plots for each category in categorical features to evaluate the dataset's composition.... | True | False | ['dataset'] | {} | ['tabular_data', 'visualization'] | ['classification', 'regression'] |

| validmind.data_validation.TabularDateTimeHistograms | Tabular Date Time Histograms | Generates histograms to provide graphical insight into the distribution of time intervals in a model's datetime... | True | False | ['dataset'] | {} | ['time_series_data', 'visualization'] | ['classification', 'regression'] |

| validmind.data_validation.TabularDescriptionTables | Tabular Description Tables | Summarizes key descriptive statistics for numerical, categorical, and datetime variables in a dataset.... | False | True | ['dataset'] | {} | ['tabular_data'] | ['classification', 'regression'] |

| validmind.data_validation.TabularNumericalHistograms | Tabular Numerical Histograms | Generates histograms for each numerical feature in a dataset to provide visual insights into data distribution and... | True | False | ['dataset'] | {} | ['tabular_data', 'visualization'] | ['classification', 'regression'] |

| validmind.data_validation.TargetRateBarPlots | Target Rate Bar Plots | Generates bar plots visualizing the default rates of categorical features for a classification machine learning... | True | False | ['dataset'] | {} | ['tabular_data', 'visualization', 'categorical_data'] | ['classification'] |

| validmind.data_validation.TimeSeriesDescription | Time Series Description | Generates a detailed analysis for the provided time series dataset, summarizing key statistics to identify trends,... | False | True | ['dataset'] | {} | ['time_series_data', 'analysis'] | ['regression'] |

| validmind.data_validation.TimeSeriesDescriptiveStatistics | Time Series Descriptive Statistics | Evaluates the descriptive statistics of a time series dataset to identify trends, patterns, and data quality issues.... | False | True | ['dataset'] | {} | ['time_series_data', 'analysis'] | ['regression'] |

| validmind.data_validation.TimeSeriesFrequency | Time Series Frequency | Evaluates consistency of time series data frequency and generates a frequency plot.... | True | True | ['dataset'] | {} | ['time_series_data'] | ['regression'] |

| validmind.data_validation.TimeSeriesHistogram | Time Series Histogram | Visualizes distribution of time-series data using histograms and Kernel Density Estimation (KDE) lines.... | True | False | ['dataset'] | {'nbins': {'type': '_empty', 'default': 30}} | ['data_validation', 'visualization', 'time_series_data'] | ['regression', 'time_series_forecasting'] |

| validmind.data_validation.TimeSeriesLinePlot | Time Series Line Plot | Generates and analyses time-series data through line plots revealing trends, patterns, anomalies over time.... | True | False | ['dataset'] | {} | ['time_series_data', 'visualization'] | ['regression'] |

| validmind.data_validation.TimeSeriesMissingValues | Time Series Missing Values | Validates time-series data quality by confirming the count of missing values is below a certain threshold.... | True | True | ['dataset'] | {'min_threshold': {'type': 'int', 'default': 1}} | ['time_series_data'] | ['regression'] |

| validmind.data_validation.TimeSeriesOutliers | Time Series Outliers | Identifies and visualizes outliers in time-series data using the z-score method.... | False | True | ['dataset'] | {'zscore_threshold': {'type': 'int', 'default': 3}} | ['time_series_data'] | ['regression'] |

| validmind.data_validation.TooManyZeroValues | Too Many Zero Values | Identifies numerical columns in a dataset that contain an excessive number of zero values, defined by a threshold... | False | True | ['dataset'] | {'max_percent_threshold': {'type': 'float', 'default': 0.03}} | ['tabular_data'] | ['regression', 'classification'] |

| validmind.data_validation.UniqueRows | Unique Rows | Verifies the diversity of the dataset by ensuring that the count of unique rows exceeds a prescribed threshold.... | False | True | ['dataset'] | {'min_percent_threshold': {'type': 'float', 'default': 1}} | ['tabular_data'] | ['regression', 'classification'] |

| validmind.data_validation.WOEBinPlots | WOE Bin Plots | Generates visualizations of Weight of Evidence (WoE) and Information Value (IV) for understanding predictive power... | True | False | ['dataset'] | {'breaks_adj': {'type': 'list', 'default': None}, 'fig_height': {'type': 'int', 'default': 600}, 'fig_width': {'type': 'int', 'default': 500}} | ['tabular_data', 'visualization', 'categorical_data'] | ['classification'] |

| validmind.data_validation.WOEBinTable | WOE Bin Table | Assesses the Weight of Evidence (WoE) and Information Value (IV) of each feature to evaluate its predictive power... | False | True | ['dataset'] | {'breaks_adj': {'type': 'list', 'default': None}} | ['tabular_data', 'categorical_data'] | ['classification'] |

| validmind.data_validation.ZivotAndrewsArch | Zivot Andrews Arch | Evaluates the order of integration and stationarity of time series data using the Zivot-Andrews unit root test.... | False | True | ['dataset'] | {} | ['time_series_data', 'stationarity', 'unit_root_test'] | ['regression'] |

| validmind.data_validation.nlp.CommonWords | Common Words | Assesses the most frequent non-stopwords in a text column for identifying prevalent language patterns.... | True | False | ['dataset'] | {} | ['nlp', 'text_data', 'visualization', 'frequency_analysis'] | ['text_classification', 'text_summarization'] |

| validmind.data_validation.nlp.Hashtags | Hashtags | Assesses hashtag frequency in a text column, highlighting usage trends and potential dataset bias or spam.... | True | False | ['dataset'] | {'top_hashtags': {'type': 'int', 'default': 25}} | ['nlp', 'text_data', 'visualization', 'frequency_analysis'] | ['text_classification', 'text_summarization'] |

| validmind.data_validation.nlp.LanguageDetection | Language Detection | Assesses the diversity of languages in a textual dataset by detecting and visualizing the distribution of languages.... | True | False | ['dataset'] | {} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.data_validation.nlp.Mentions | Mentions | Calculates and visualizes frequencies of '@' prefixed mentions in a text-based dataset for NLP model analysis.... | True | False | ['dataset'] | {'top_mentions': {'type': 'int', 'default': 25}} | ['nlp', 'text_data', 'visualization', 'frequency_analysis'] | ['text_classification', 'text_summarization'] |

| validmind.data_validation.nlp.PolarityAndSubjectivity | Polarity And Subjectivity | Analyzes the polarity and subjectivity of text data within a given dataset to visualize the sentiment distribution.... | True | True | ['dataset'] | {'threshold_subjectivity': {'type': '_empty', 'default': 0.5}, 'threshold_polarity': {'type': '_empty', 'default': 0}} | ['nlp', 'text_data', 'data_validation'] | ['nlp'] |

| validmind.data_validation.nlp.Punctuations | Punctuations | Analyzes and visualizes the frequency distribution of punctuation usage in a given text dataset.... | True | False | ['dataset'] | {'count_mode': {'type': '_empty', 'default': 'token'}} | ['nlp', 'text_data', 'visualization', 'frequency_analysis'] | ['text_classification', 'text_summarization', 'nlp'] |

| validmind.data_validation.nlp.Sentiment | Sentiment | Analyzes the sentiment of text data within a dataset using the VADER sentiment analysis tool.... | True | False | ['dataset'] | {} | ['nlp', 'text_data', 'data_validation'] | ['nlp'] |

| validmind.data_validation.nlp.StopWords | Stop Words | Evaluates and visualizes the frequency of English stop words in a text dataset against a defined threshold.... | True | True | ['dataset'] | {'min_percent_threshold': {'type': 'float', 'default': 0.5}, 'num_words': {'type': 'int', 'default': 25}} | ['nlp', 'text_data', 'frequency_analysis', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.data_validation.nlp.TextDescription | Text Description | Conducts comprehensive textual analysis on a dataset using NLTK to evaluate various parameters and generate... | True | False | ['dataset'] | {'unwanted_tokens': {'type': 'set', 'default': {'us', "s'", 'mr', 'ms', 'dollar', ' ', "''", '``', "'s", 'dr', 's', 'mrs'}}, 'lang': {'type': 'str', 'default': 'english'}} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.data_validation.nlp.Toxicity | Toxicity | Assesses the toxicity of text data within a dataset to visualize the distribution of toxicity scores.... | True | False | ['dataset'] | {} | ['nlp', 'text_data', 'data_validation'] | ['nlp'] |

| validmind.model_validation.BertScore | Bert Score | Assesses the quality of machine-generated text using BERTScore metrics and visualizes results through histograms... | True | True | ['dataset', 'model'] | {'evaluation_model': {'type': '_empty', 'default': 'distilbert-base-uncased'}} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.BleuScore | Bleu Score | Evaluates the quality of machine-generated text using BLEU metrics and visualizes the results through histograms... | True | True | ['dataset', 'model'] | {} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.ClusterSizeDistribution | Cluster Size Distribution | Assesses the performance of clustering models by comparing the distribution of cluster sizes in model predictions... | True | False | ['dataset', 'model'] | {} | ['sklearn', 'model_performance'] | ['clustering'] |

| validmind.model_validation.ContextualRecall | Contextual Recall | Evaluates a Natural Language Generation model's ability to generate contextually relevant and factually correct... | True | True | ['dataset', 'model'] | {} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.FeaturesAUC | Features AUC | Evaluates the discriminatory power of each individual feature within a binary classification model by calculating... | True | False | ['dataset'] | {'fontsize': {'type': 'int', 'default': 12}, 'figure_height': {'type': 'int', 'default': 500}} | ['feature_importance', 'AUC', 'visualization'] | ['classification'] |

| validmind.model_validation.MeteorScore | Meteor Score | Assesses the quality of machine-generated translations by comparing them to human-produced references using the... | True | True | ['dataset', 'model'] | {} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.ModelMetadata | Model Metadata | Compare metadata of different models and generate a summary table with the results.... | False | True | ['model'] | {} | ['model_training', 'metadata'] | ['regression', 'time_series_forecasting'] |

| validmind.model_validation.ModelPredictionResiduals | Model Prediction Residuals | Assesses normality and behavior of residuals in regression models through visualization and statistical tests.... | True | True | ['dataset', 'model'] | {'nbins': {'type': 'int', 'default': 100}, 'p_value_threshold': {'type': 'float', 'default': 0.05}, 'start_date': {'type': 'Optional', 'default': None}, 'end_date': {'type': 'Optional', 'default': None}} | ['regression'] | ['residual_analysis', 'visualization'] |

| validmind.model_validation.RegardScore | Regard Score | Assesses the sentiment and potential biases in text generated by NLP models by computing and visualizing regard... | True | True | ['dataset', 'model'] | {} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.RegressionResidualsPlot | Regression Residuals Plot | Evaluates regression model performance using residual distribution and actual vs. predicted plots.... | True | False | ['model', 'dataset'] | {'bin_size': {'type': 'float', 'default': 0.1}} | ['model_performance', 'visualization'] | ['regression'] |

| validmind.model_validation.RougeScore | Rouge Score | Assesses the quality of machine-generated text using ROUGE metrics and visualizes the results to provide... | True | True | ['dataset', 'model'] | {'metric': {'type': 'str', 'default': 'rouge-1'}} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.TimeSeriesPredictionWithCI | Time Series Prediction With CI | Assesses predictive accuracy and uncertainty in time series models, highlighting breaches beyond confidence... | True | True | ['dataset', 'model'] | {'confidence': {'type': 'float', 'default': 0.95}} | ['model_predictions', 'visualization'] | ['regression', 'time_series_forecasting'] |

| validmind.model_validation.TimeSeriesPredictionsPlot | Time Series Predictions Plot | Plot actual vs predicted values for time series data and generate a visual comparison for the model.... | True | False | ['dataset', 'model'] | {} | ['model_predictions', 'visualization'] | ['regression', 'time_series_forecasting'] |

| validmind.model_validation.TimeSeriesR2SquareBySegments | Time Series R2 Square By Segments | Evaluates the R-Squared values of regression models over specified time segments in time series data to assess... | True | True | ['dataset', 'model'] | {'segments': {'type': 'Optional', 'default': None}} | ['model_performance', 'sklearn'] | ['regression', 'time_series_forecasting'] |

| validmind.model_validation.TokenDisparity | Token Disparity | Evaluates the token disparity between reference and generated texts, visualizing the results through histograms and... | True | True | ['dataset', 'model'] | {} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.ToxicityScore | Toxicity Score | Assesses the toxicity levels of texts generated by NLP models to identify and mitigate harmful or offensive content.... | True | True | ['dataset', 'model'] | {} | ['nlp', 'text_data', 'visualization'] | ['text_classification', 'text_summarization'] |

| validmind.model_validation.embeddings.ClusterDistribution | Cluster Distribution | Assesses the distribution of text embeddings across clusters produced by a model using KMeans clustering.... | True | False | ['model', 'dataset'] | {'num_clusters': {'type': 'int', 'default': 5}} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.CosineSimilarityComparison | Cosine Similarity Comparison | Assesses the similarity between embeddings generated by different models using Cosine Similarity, providing both... | True | True | ['dataset', 'models'] | {} | ['visualization', 'dimensionality_reduction', 'embeddings'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.embeddings.CosineSimilarityDistribution | Cosine Similarity Distribution | Assesses the similarity between predicted text embeddings from a model using a Cosine Similarity distribution... | True | False | ['dataset', 'model'] | {} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.CosineSimilarityHeatmap | Cosine Similarity Heatmap | Generates an interactive heatmap to visualize the cosine similarities among embeddings derived from a given model.... | True | False | ['dataset', 'model'] | {'title': {'type': '_empty', 'default': 'Cosine Similarity Matrix'}, 'color': {'type': '_empty', 'default': 'Cosine Similarity'}, 'xaxis_title': {'type': '_empty', 'default': 'Index'}, 'yaxis_title': {'type': '_empty', 'default': 'Index'}, 'color_scale': {'type': '_empty', 'default': 'Blues'}} | ['visualization', 'dimensionality_reduction', 'embeddings'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.embeddings.DescriptiveAnalytics | Descriptive Analytics | Evaluates statistical properties of text embeddings in an ML model via mean, median, and standard deviation... | True | False | ['dataset', 'model'] | {} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.EmbeddingsVisualization2D | Embeddings Visualization 2D | Visualizes 2D representation of text embeddings generated by a model using t-SNE technique.... | True | False | ['dataset', 'model'] | {'cluster_column': {'type': 'Optional', 'default': None}, 'perplexity': {'type': 'int', 'default': 30}} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.EuclideanDistanceComparison | Euclidean Distance Comparison | Assesses and visualizes the dissimilarity between model embeddings using Euclidean distance, providing insights... | True | True | ['dataset', 'models'] | {} | ['visualization', 'dimensionality_reduction', 'embeddings'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.embeddings.EuclideanDistanceHeatmap | Euclidean Distance Heatmap | Generates an interactive heatmap to visualize the Euclidean distances among embeddings derived from a given model.... | True | False | ['dataset', 'model'] | {'title': {'type': '_empty', 'default': 'Euclidean Distance Matrix'}, 'color': {'type': '_empty', 'default': 'Euclidean Distance'}, 'xaxis_title': {'type': '_empty', 'default': 'Index'}, 'yaxis_title': {'type': '_empty', 'default': 'Index'}, 'color_scale': {'type': '_empty', 'default': 'Blues'}} | ['visualization', 'dimensionality_reduction', 'embeddings'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.embeddings.PCAComponentsPairwisePlots | PCA Components Pairwise Plots | Generates scatter plots for pairwise combinations of principal component analysis (PCA) components of model... | True | False | ['dataset', 'model'] | {'n_components': {'type': 'int', 'default': 3}} | ['visualization', 'dimensionality_reduction', 'embeddings'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.embeddings.StabilityAnalysisKeyword | Stability Analysis Keyword | Evaluates robustness of embedding models to keyword swaps in the test dataset.... | True | True | ['dataset', 'model'] | {'keyword_dict': {'type': 'Dict', 'default': None}, 'mean_similarity_threshold': {'type': 'float', 'default': 0.7}} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.StabilityAnalysisRandomNoise | Stability Analysis Random Noise | Assesses the robustness of text embeddings models to random noise introduced via text perturbations.... | True | True | ['dataset', 'model'] | {'probability': {'type': 'float', 'default': 0.02}, 'mean_similarity_threshold': {'type': 'float', 'default': 0.7}} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.StabilityAnalysisSynonyms | Stability Analysis Synonyms | Evaluates the stability of text embeddings models when words in test data are replaced by their synonyms randomly.... | True | True | ['dataset', 'model'] | {'probability': {'type': 'float', 'default': 0.02}, 'mean_similarity_threshold': {'type': 'float', 'default': 0.7}} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.StabilityAnalysisTranslation | Stability Analysis Translation | Evaluates robustness of text embeddings models to noise introduced by translating the original text to another... | True | True | ['dataset', 'model'] | {'source_lang': {'type': 'str', 'default': 'en'}, 'target_lang': {'type': 'str', 'default': 'fr'}, 'mean_similarity_threshold': {'type': 'float', 'default': 0.7}} | ['llm', 'text_data', 'embeddings', 'visualization'] | ['feature_extraction'] |

| validmind.model_validation.embeddings.TSNEComponentsPairwisePlots | TSNE Components Pairwise Plots | Creates scatter plots for pairwise combinations of t-SNE components to visualize embeddings and highlight potential... | True | False | ['dataset', 'model'] | {'n_components': {'type': 'int', 'default': 2}, 'perplexity': {'type': 'int', 'default': 30}, 'title': {'type': 'str', 'default': 't-SNE'}} | ['visualization', 'dimensionality_reduction', 'embeddings'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.ragas.AnswerCorrectness | Answer Correctness | Evaluates the correctness of answers in a dataset with respect to the provided ground... | True | True | ['dataset'] | {'user_input_column': {'type': 'str', 'default': 'user_input'}, 'response_column': {'type': 'str', 'default': 'response'}, 'reference_column': {'type': 'str', 'default': 'reference'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.ragas.AspectCritic | Aspect Critic | Evaluates generations against the following aspects: harmfulness, maliciousness,... | True | True | ['dataset'] | {'user_input_column': {'type': 'str', 'default': 'user_input'}, 'response_column': {'type': 'str', 'default': 'response'}, 'retrieved_contexts_column': {'type': 'Optional', 'default': None}, 'aspects': {'type': 'List', 'default': ['coherence', 'conciseness', 'correctness', 'harmfulness', 'maliciousness']}, 'additional_aspects': {'type': 'Optional', 'default': None}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'qualitative'] | ['text_summarization', 'text_generation', 'text_qa'] |

| validmind.model_validation.ragas.ContextEntityRecall | Context Entity Recall | Evaluates the context entity recall for dataset entries and visualizes the results.... | True | True | ['dataset'] | {'retrieved_contexts_column': {'type': 'str', 'default': 'retrieved_contexts'}, 'reference_column': {'type': 'str', 'default': 'reference'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'retrieval_performance'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.ragas.ContextPrecision | Context Precision | Context Precision is a metric that evaluates whether all of the ground-truth... | True | True | ['dataset'] | {'user_input_column': {'type': 'str', 'default': 'user_input'}, 'retrieved_contexts_column': {'type': 'str', 'default': 'retrieved_contexts'}, 'reference_column': {'type': 'str', 'default': 'reference'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'retrieval_performance'] | ['text_qa', 'text_generation', 'text_summarization', 'text_classification'] |

| validmind.model_validation.ragas.ContextPrecisionWithoutReference | Context Precision Without Reference | Context Precision Without Reference is a metric used to evaluate the relevance of... | True | True | ['dataset'] | {'user_input_column': {'type': 'str', 'default': 'user_input'}, 'retrieved_contexts_column': {'type': 'str', 'default': 'retrieved_contexts'}, 'response_column': {'type': 'str', 'default': 'response'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'retrieval_performance'] | ['text_qa', 'text_generation', 'text_summarization', 'text_classification'] |

| validmind.model_validation.ragas.ContextRecall | Context Recall | Context recall measures the extent to which the retrieved context aligns with the... | True | True | ['dataset'] | {'user_input_column': {'type': 'str', 'default': 'user_input'}, 'retrieved_contexts_column': {'type': 'str', 'default': 'retrieved_contexts'}, 'reference_column': {'type': 'str', 'default': 'reference'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'retrieval_performance'] | ['text_qa', 'text_generation', 'text_summarization', 'text_classification'] |

| validmind.model_validation.ragas.Faithfulness | Faithfulness | Evaluates the faithfulness of the generated answers with respect to retrieved contexts.... | True | True | ['dataset'] | {'user_input_column': {'type': 'str', 'default': 'user_input'}, 'response_column': {'type': 'str', 'default': 'response'}, 'retrieved_contexts_column': {'type': 'str', 'default': 'retrieved_contexts'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'rag_performance'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.ragas.NoiseSensitivity | Noise Sensitivity | Assesses the sensitivity of a Large Language Model (LLM) to noise in retrieved context by measuring how often it... | True | True | ['dataset'] | {'response_column': {'type': 'str', 'default': 'response'}, 'retrieved_contexts_column': {'type': 'str', 'default': 'retrieved_contexts'}, 'reference_column': {'type': 'str', 'default': 'reference'}, 'focus': {'type': 'str', 'default': 'relevant'}, 'user_input_column': {'type': 'str', 'default': 'user_input'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'rag_performance'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.ragas.ResponseRelevancy | Response Relevancy | Assesses how pertinent the generated answer is to the given prompt.... | True | True | ['dataset'] | {'user_input_column': {'type': 'str', 'default': 'user_input'}, 'retrieved_contexts_column': {'type': 'str', 'default': None}, 'response_column': {'type': 'str', 'default': 'response'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm', 'rag_performance'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.ragas.SemanticSimilarity | Semantic Similarity | Calculates the semantic similarity between generated responses and ground truths... | True | True | ['dataset'] | {'response_column': {'type': 'str', 'default': 'response'}, 'reference_column': {'type': 'str', 'default': 'reference'}, 'judge_llm': {'type': '_empty', 'default': None}, 'judge_embeddings': {'type': '_empty', 'default': None}} | ['ragas', 'llm'] | ['text_qa', 'text_generation', 'text_summarization'] |

| validmind.model_validation.sklearn.AdjustedMutualInformation | Adjusted Mutual Information | Evaluates clustering model performance by measuring mutual information between true and predicted labels, adjusting... | False | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance', 'clustering'] | ['clustering'] |

| validmind.model_validation.sklearn.AdjustedRandIndex | Adjusted Rand Index | Measures the similarity between two data clusters using the Adjusted Rand Index (ARI) metric in clustering machine... | False | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance', 'clustering'] | ['clustering'] |

| validmind.model_validation.sklearn.CalibrationCurve | Calibration Curve | Evaluates the calibration of probability estimates by comparing predicted probabilities against observed... | True | False | ['model', 'dataset'] | {'n_bins': {'type': 'int', 'default': 10}} | ['sklearn', 'model_performance', 'classification'] | ['classification'] |

| validmind.model_validation.sklearn.ClassifierPerformance | Classifier Performance | Evaluates performance of binary or multiclass classification models using precision, recall, F1-Score, accuracy,... | False | True | ['dataset', 'model'] | {'average': {'type': 'str', 'default': 'macro'}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.ClassifierThresholdOptimization | Classifier Threshold Optimization | Analyzes and visualizes different threshold optimization methods for binary classification models.... | False | True | ['dataset', 'model'] | {'methods': {'type': 'Optional', 'default': None}, 'target_recall': {'type': 'Optional', 'default': None}} | ['model_validation', 'threshold_optimization', 'classification_metrics'] | ['classification'] |

| validmind.model_validation.sklearn.ClusterCosineSimilarity | Cluster Cosine Similarity | Measures the intra-cluster similarity of a clustering model using cosine similarity.... | False | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance', 'clustering'] | ['clustering'] |

| validmind.model_validation.sklearn.ClusterPerformanceMetrics | Cluster Performance Metrics | Evaluates the performance of clustering machine learning models using multiple established metrics.... | False | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance', 'clustering'] | ['clustering'] |

| validmind.model_validation.sklearn.CompletenessScore | Completeness Score | Evaluates a clustering model's capacity to categorize instances from a single class into the same cluster.... | False | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance', 'clustering'] | ['clustering'] |

| validmind.model_validation.sklearn.ConfusionMatrix | Confusion Matrix | Evaluates and visually represents the classification ML model's predictive performance using a Confusion Matrix... | True | False | ['dataset', 'model'] | {'threshold': {'type': 'float', 'default': 0.5}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.FeatureImportance | Feature Importance | Compute feature importance scores for a given model and generate a summary table... | False | True | ['dataset', 'model'] | {'num_features': {'type': 'int', 'default': 3}} | ['model_explainability', 'sklearn'] | ['regression', 'time_series_forecasting'] |

| validmind.model_validation.sklearn.FowlkesMallowsScore | Fowlkes Mallows Score | Evaluates the similarity between predicted and actual cluster assignments in a model using the Fowlkes-Mallows... | False | True | ['dataset', 'model'] | {} | ['sklearn', 'model_performance'] | ['clustering'] |

| validmind.model_validation.sklearn.HomogeneityScore | Homogeneity Score | Assesses clustering homogeneity by comparing true and predicted labels, scoring from 0 (heterogeneous) to 1... | False | True | ['dataset', 'model'] | {} | ['sklearn', 'model_performance'] | ['clustering'] |

| validmind.model_validation.sklearn.HyperParametersTuning | Hyper Parameters Tuning | Performs exhaustive grid search over specified parameter ranges to find optimal model configurations... | False | True | ['model', 'dataset'] | {'param_grid': {'type': 'dict', 'default': None}, 'scoring': {'type': 'Union', 'default': None}, 'thresholds': {'type': 'Union', 'default': None}, 'fit_params': {'type': 'dict', 'default': None}} | ['sklearn', 'model_performance'] | ['clustering', 'classification'] |

| validmind.model_validation.sklearn.KMeansClustersOptimization | K Means Clusters Optimization | Optimizes the number of clusters in K-means models using Elbow and Silhouette methods.... | True | False | ['model', 'dataset'] | {'n_clusters': {'type': 'Optional', 'default': None}} | ['sklearn', 'model_performance', 'kmeans'] | ['clustering'] |

| validmind.model_validation.sklearn.MinimumAccuracy | Minimum Accuracy | Checks if the model's prediction accuracy meets or surpasses a specified threshold.... | False | True | ['dataset', 'model'] | {'min_threshold': {'type': 'float', 'default': 0.7}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.MinimumF1Score | Minimum F1 Score | Assesses if the model's F1 score on the validation set meets a predefined minimum threshold, ensuring balanced... | False | True | ['dataset', 'model'] | {'min_threshold': {'type': 'float', 'default': 0.5}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.MinimumROCAUCScore | Minimum ROCAUC Score | Validates model by checking if the ROC AUC score meets or surpasses a specified threshold.... | False | True | ['dataset', 'model'] | {'min_threshold': {'type': 'float', 'default': 0.5}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.ModelParameters | Model Parameters | Extracts and displays model parameters in a structured format for transparency and reproducibility.... | False | True | ['model'] | {'model_params': {'type': 'Optional', 'default': None}} | ['model_training', 'metadata'] | ['classification', 'regression'] |

| validmind.model_validation.sklearn.ModelsPerformanceComparison | Models Performance Comparison | Evaluates and compares the performance of multiple Machine Learning models using various metrics like accuracy,... | False | True | ['dataset', 'models'] | {} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance', 'model_comparison'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.OverfitDiagnosis | Overfit Diagnosis | Assesses potential overfitting in a model's predictions, identifying regions where performance between training and... | True | True | ['model', 'datasets'] | {'metric': {'type': 'str', 'default': None}, 'cut_off_threshold': {'type': 'float', 'default': 0.04}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'linear_regression', 'model_diagnosis'] | ['classification', 'regression'] |

| validmind.model_validation.sklearn.PermutationFeatureImportance | Permutation Feature Importance | Assesses the significance of each feature in a model by evaluating the impact on model performance when feature... | True | False | ['model', 'dataset'] | {'fontsize': {'type': 'Optional', 'default': None}, 'figure_height': {'type': 'Optional', 'default': None}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'feature_importance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.PopulationStabilityIndex | Population Stability Index | Assesses the Population Stability Index (PSI) to quantify the stability of an ML model's predictions across... | True | True | ['datasets', 'model'] | {'num_bins': {'type': 'int', 'default': 10}, 'mode': {'type': 'str', 'default': 'fixed'}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.PrecisionRecallCurve | Precision Recall Curve | Evaluates the precision-recall trade-off for binary classification models and visualizes the Precision-Recall curve.... | True | False | ['model', 'dataset'] | {} | ['sklearn', 'binary_classification', 'model_performance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.ROCCurve | ROC Curve | Evaluates binary classification model performance by generating and plotting the Receiver Operating Characteristic... | True | False | ['model', 'dataset'] | {} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.RegressionErrors | Regression Errors | Assesses the performance and error distribution of a regression model using various error metrics.... | False | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance'] | ['regression', 'classification'] |

| validmind.model_validation.sklearn.RegressionErrorsComparison | Regression Errors Comparison | Assesses multiple regression error metrics to compare model performance across different datasets, emphasizing... | False | True | ['datasets', 'models'] | {} | ['model_performance', 'sklearn'] | ['regression', 'time_series_forecasting'] |

| validmind.model_validation.sklearn.RegressionPerformance | Regression Performance | Evaluates the performance of a regression model using five different metrics: MAE, MSE, RMSE, MAPE, and MBD.... | False | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance'] | ['regression'] |

| validmind.model_validation.sklearn.RegressionR2Square | Regression R2 Square | Assesses the overall goodness-of-fit of a regression model by evaluating R-squared (R2) and Adjusted R-squared (Adj... | False | True | ['dataset', 'model'] | {} | ['sklearn', 'model_performance'] | ['regression'] |

| validmind.model_validation.sklearn.RegressionR2SquareComparison | Regression R2 Square Comparison | Compares R-Squared and Adjusted R-Squared values for different regression models across multiple datasets to assess... | False | True | ['datasets', 'models'] | {} | ['model_performance', 'sklearn'] | ['regression', 'time_series_forecasting'] |

| validmind.model_validation.sklearn.RobustnessDiagnosis | Robustness Diagnosis | Assesses the robustness of a machine learning model by evaluating performance decay under noisy conditions.... | True | True | ['datasets', 'model'] | {'metric': {'type': 'str', 'default': None}, 'scaling_factor_std_dev_list': {'type': 'List', 'default': [0.1, 0.2, 0.3, 0.4, 0.5]}, 'performance_decay_threshold': {'type': 'float', 'default': 0.05}} | ['sklearn', 'model_diagnosis', 'visualization'] | ['classification', 'regression'] |

| validmind.model_validation.sklearn.SHAPGlobalImportance | SHAP Global Importance | Evaluates and visualizes global feature importance using SHAP values for model explanation and risk identification.... | False | True | ['model', 'dataset'] | {'kernel_explainer_samples': {'type': 'int', 'default': 10}, 'tree_or_linear_explainer_samples': {'type': 'int', 'default': 200}, 'class_of_interest': {'type': 'Optional', 'default': None}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'feature_importance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.ScoreProbabilityAlignment | Score Probability Alignment | Analyzes the alignment between credit scores and predicted probabilities.... | True | True | ['model', 'dataset'] | {'score_column': {'type': 'str', 'default': 'score'}, 'n_bins': {'type': 'int', 'default': 10}} | ['visualization', 'credit_risk', 'calibration'] | ['classification'] |

| validmind.model_validation.sklearn.SilhouettePlot | Silhouette Plot | Calculates and visualizes Silhouette Score, assessing the degree of data point suitability to its cluster in ML... | True | True | ['model', 'dataset'] | {} | ['sklearn', 'model_performance'] | ['clustering'] |

| validmind.model_validation.sklearn.TrainingTestDegradation | Training Test Degradation | Tests if model performance degradation between training and test datasets exceeds a predefined threshold.... | False | True | ['datasets', 'model'] | {'max_threshold': {'type': 'float', 'default': 0.1}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.model_validation.sklearn.VMeasure | V Measure | Evaluates homogeneity and completeness of a clustering model using the V Measure Score.... | False | True | ['dataset', 'model'] | {} | ['sklearn', 'model_performance'] | ['clustering'] |

| validmind.model_validation.sklearn.WeakspotsDiagnosis | Weakspots Diagnosis | Identifies and visualizes weak spots in a machine learning model's performance across various sections of the... | True | True | ['datasets', 'model'] | {'features_columns': {'type': 'Optional', 'default': None}, 'metrics': {'type': 'Optional', 'default': None}, 'thresholds': {'type': 'Optional', 'default': None}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_diagnosis', 'visualization'] | ['classification', 'text_classification'] |

| validmind.model_validation.statsmodels.AutoARIMA | Auto ARIMA | Evaluates ARIMA models for time-series forecasting, ranking them using Bayesian and Akaike Information Criteria.... | False | True | ['model', 'dataset'] | {} | ['time_series_data', 'forecasting', 'model_selection', 'statsmodels'] | ['regression'] |

| validmind.model_validation.statsmodels.CumulativePredictionProbabilities | Cumulative Prediction Probabilities | Visualizes cumulative probabilities of positive and negative classes in classification models.... | True | False | ['dataset', 'model'] | {'title': {'type': 'str', 'default': 'Cumulative Probabilities'}} | ['visualization', 'credit_risk'] | ['classification'] |

| validmind.model_validation.statsmodels.DurbinWatsonTest | Durbin Watson Test | Assesses autocorrelation in time series data features using the Durbin-Watson statistic.... | False | True | ['dataset', 'model'] | {'threshold': {'type': 'List', 'default': [1.5, 2.5]}} | ['time_series_data', 'forecasting', 'statistical_test', 'statsmodels'] | ['regression'] |

| validmind.model_validation.statsmodels.GINITable | GINI Table | Evaluates classification model performance using AUC, GINI, and KS metrics for training and test datasets.... | False | True | ['dataset', 'model'] | {} | ['model_performance'] | ['classification'] |

| validmind.model_validation.statsmodels.KolmogorovSmirnov | Kolmogorov Smirnov | Assesses whether each feature in the dataset aligns with a normal distribution using the Kolmogorov-Smirnov test.... | False | True | ['model', 'dataset'] | {'dist': {'type': 'str', 'default': 'norm'}} | ['tabular_data', 'data_distribution', 'statistical_test', 'statsmodels'] | ['classification', 'regression'] |

| validmind.model_validation.statsmodels.Lilliefors | Lilliefors | Assesses the normality of feature distributions in an ML model's training dataset using the Lilliefors test.... | False | True | ['dataset'] | {} | ['tabular_data', 'data_distribution', 'statistical_test', 'statsmodels'] | ['classification', 'regression'] |

| validmind.model_validation.statsmodels.PredictionProbabilitiesHistogram | Prediction Probabilities Histogram | Assesses the predictive probability distribution for binary classification to evaluate model performance and... | True | False | ['dataset', 'model'] | {'title': {'type': 'str', 'default': 'Histogram of Predictive Probabilities'}} | ['visualization', 'credit_risk'] | ['classification'] |

| validmind.model_validation.statsmodels.RegressionCoeffs | Regression Coeffs | Assesses the significance and uncertainty of predictor variables in a regression model through visualization of... | True | True | ['model'] | {} | ['tabular_data', 'visualization', 'model_training'] | ['regression'] |

| validmind.model_validation.statsmodels.RegressionFeatureSignificance | Regression Feature Significance | Assesses and visualizes the statistical significance of features in a regression model.... | True | False | ['model'] | {'fontsize': {'type': 'int', 'default': 10}, 'p_threshold': {'type': 'float', 'default': 0.05}} | ['statistical_test', 'model_interpretation', 'visualization', 'feature_importance'] | ['regression'] |

| validmind.model_validation.statsmodels.RegressionModelForecastPlot | Regression Model Forecast Plot | Generates plots to visually compare the forecasted outcomes of a regression model against actual observed values over... | True | False | ['model', 'dataset'] | {'start_date': {'type': 'Optional', 'default': None}, 'end_date': {'type': 'Optional', 'default': None}} | ['time_series_data', 'forecasting', 'visualization'] | ['regression'] |

| validmind.model_validation.statsmodels.RegressionModelForecastPlotLevels | Regression Model Forecast Plot Levels | Assesses the alignment between forecasted and observed values in regression models through visual plots... | True | False | ['model', 'dataset'] | {} | ['time_series_data', 'forecasting', 'visualization'] | ['regression'] |

| validmind.model_validation.statsmodels.RegressionModelSensitivityPlot | Regression Model Sensitivity Plot | Assesses the sensitivity of a regression model to changes in independent variables by applying shocks and... | True | False | ['dataset', 'model'] | {'shocks': {'type': 'List', 'default': [0.1]}, 'transformation': {'type': 'Optional', 'default': None}} | ['senstivity_analysis', 'visualization'] | ['regression'] |

| validmind.model_validation.statsmodels.RegressionModelSummary | Regression Model Summary | Evaluates regression model performance using metrics including R-Squared, Adjusted R-Squared, MSE, and RMSE.... | False | True | ['dataset', 'model'] | {} | ['model_performance', 'regression'] | ['regression'] |

| validmind.model_validation.statsmodels.RegressionPermutationFeatureImportance | Regression Permutation Feature Importance | Assesses the significance of each feature in a model by evaluating the impact on model performance when feature... | True | False | ['dataset', 'model'] | {'fontsize': {'type': 'int', 'default': 12}, 'figure_height': {'type': 'int', 'default': 500}} | ['statsmodels', 'feature_importance', 'visualization'] | ['regression'] |

| validmind.model_validation.statsmodels.ScorecardHistogram | Scorecard Histogram | The Scorecard Histogram test evaluates the distribution of credit scores between default and non-default instances,... | True | False | ['dataset'] | {'title': {'type': 'str', 'default': 'Histogram of Scores'}, 'score_column': {'type': 'str', 'default': 'score'}} | ['visualization', 'credit_risk', 'logistic_regression'] | ['classification'] |

| validmind.ongoing_monitoring.CalibrationCurveDrift | Calibration Curve Drift | Evaluates changes in probability calibration between reference and monitoring datasets.... | True | True | ['datasets', 'model'] | {'n_bins': {'type': 'int', 'default': 10}, 'drift_pct_threshold': {'type': 'float', 'default': 20}} | ['sklearn', 'binary_classification', 'model_performance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.ongoing_monitoring.ClassDiscriminationDrift | Class Discrimination Drift | Compares classification discrimination metrics between reference and monitoring datasets.... | False | True | ['datasets', 'model'] | {'drift_pct_threshold': {'type': '_empty', 'default': 20}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.ongoing_monitoring.ClassImbalanceDrift | Class Imbalance Drift | Evaluates drift in class distribution between reference and monitoring datasets.... | True | True | ['datasets'] | {'drift_pct_threshold': {'type': 'float', 'default': 5.0}, 'title': {'type': 'str', 'default': 'Class Distribution Drift'}} | ['tabular_data', 'binary_classification', 'multiclass_classification'] | ['classification'] |

| validmind.ongoing_monitoring.ClassificationAccuracyDrift | Classification Accuracy Drift | Compares classification accuracy metrics between reference and monitoring datasets.... | False | True | ['datasets', 'model'] | {'drift_pct_threshold': {'type': '_empty', 'default': 20}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.ongoing_monitoring.ConfusionMatrixDrift | Confusion Matrix Drift | Compares confusion matrix metrics between reference and monitoring datasets.... | False | True | ['datasets', 'model'] | {'drift_pct_threshold': {'type': '_empty', 'default': 20}} | ['sklearn', 'binary_classification', 'multiclass_classification', 'model_performance'] | ['classification', 'text_classification'] |

| validmind.ongoing_monitoring.CumulativePredictionProbabilitiesDrift | Cumulative Prediction Probabilities Drift | Compares cumulative prediction probability distributions between reference and monitoring datasets.... | True | False | ['datasets', 'model'] | {} | ['visualization', 'credit_risk'] | ['classification'] |

| validmind.ongoing_monitoring.FeatureDrift | Feature Drift | Evaluates changes in feature distribution over time to identify potential model drift.... | True | True | ['datasets'] | {'bins': {'type': '_empty', 'default': [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9]}, 'feature_columns': {'type': '_empty', 'default': None}, 'psi_threshold': {'type': '_empty', 'default': 0.2}} | ['visualization'] | ['monitoring'] |

| validmind.ongoing_monitoring.PredictionAcrossEachFeature | Prediction Across Each Feature | Assesses differences in model predictions across individual features between reference and monitoring datasets... | True | False | ['datasets', 'model'] | {} | ['visualization'] | ['monitoring'] |

| validmind.ongoing_monitoring.PredictionCorrelation | Prediction Correlation | Assesses correlation changes between model predictions from reference and monitoring datasets to detect potential... | True | True | ['datasets', 'model'] | {'drift_pct_threshold': {'type': 'float', 'default': 20}} | ['visualization'] | ['monitoring'] |

| validmind.ongoing_monitoring.PredictionProbabilitiesHistogramDrift | Prediction Probabilities Histogram Drift | Compares prediction probability distributions between reference and monitoring datasets.... | True | True | ['datasets', 'model'] | {'title': {'type': '_empty', 'default': 'Prediction Probabilities Histogram Drift'}, 'drift_pct_threshold': {'type': 'float', 'default': 20.0}} | ['visualization', 'credit_risk'] | ['classification'] |

| validmind.ongoing_monitoring.PredictionQuantilesAcrossFeatures | Prediction Quantiles Across Features | Assesses differences in model prediction distributions across individual features between reference... | True | False | ['datasets', 'model'] | {} | ['visualization'] | ['monitoring'] |

| validmind.ongoing_monitoring.ROCCurveDrift | ROC Curve Drift | Compares ROC curves between reference and monitoring datasets.... | True | False | ['datasets', 'model'] | {} | ['sklearn', 'binary_classification', 'model_performance', 'visualization'] | ['classification', 'text_classification'] |

| validmind.ongoing_monitoring.ScoreBandsDrift | Score Bands Drift | Analyzes drift in population distribution and default rates across score bands.... | False | True | ['datasets', 'model'] | {'score_column': {'type': 'str', 'default': 'score'}, 'score_bands': {'type': 'list', 'default': None}, 'drift_threshold': {'type': 'float', 'default': 20.0}} | ['visualization', 'credit_risk', 'scorecard'] | ['classification'] |

| validmind.ongoing_monitoring.ScorecardHistogramDrift | Scorecard Histogram Drift | Compares score distributions between reference and monitoring datasets for each class.... | True | True | ['datasets'] | {'score_column': {'type': 'str', 'default': 'score'}, 'title': {'type': 'str', 'default': 'Scorecard Histogram Drift'}, 'drift_pct_threshold': {'type': 'float', 'default': 20.0}} | ['visualization', 'credit_risk', 'logistic_regression'] | ['classification'] |

| validmind.ongoing_monitoring.TargetPredictionDistributionPlot | Target Prediction Distribution Plot | Assesses differences in prediction distributions between a reference dataset and a monitoring dataset to identify... | True | True | ['datasets', 'model'] | {'drift_pct_threshold': {'type': 'float', 'default': 20}} | ['visualization'] | ['monitoring'] |

| validmind.plots.BoxPlot | Box Plot | Generates customizable box plots for numerical features in a dataset with optional grouping using Plotly.... | True | False | ['dataset'] | {'columns': {'type': 'Optional', 'default': None}, 'group_by': {'type': 'Optional', 'default': None}, 'width': {'type': 'int', 'default': 1800}, 'height': {'type': 'int', 'default': 1200}, 'colors': {'type': 'Optional', 'default': None}, 'show_outliers': {'type': 'bool', 'default': True}, 'title_prefix': {'type': 'str', 'default': 'Box Plot of'}} | ['tabular_data', 'visualization', 'data_quality'] | ['classification', 'regression', 'clustering'] |

| validmind.plots.CorrelationHeatmap | Correlation Heatmap | Generates customizable correlation heatmap plots for numerical features in a dataset using Plotly.... | True | False | ['dataset'] | {'columns': {'type': 'Optional', 'default': None}, 'method': {'type': 'str', 'default': 'pearson'}, 'show_values': {'type': 'bool', 'default': True}, 'colorscale': {'type': 'str', 'default': 'RdBu'}, 'width': {'type': 'int', 'default': 800}, 'height': {'type': 'int', 'default': 600}, 'mask_upper': {'type': 'bool', 'default': False}, 'threshold': {'type': 'Optional', 'default': None}, 'title': {'type': 'str', 'default': 'Correlation Heatmap'}} | ['tabular_data', 'visualization', 'correlation'] | ['classification', 'regression', 'clustering'] |

| validmind.plots.HistogramPlot | Histogram Plot | Generates customizable histogram plots for numerical features in a dataset using Plotly.... | True | False | ['dataset'] | {'columns': {'type': 'Optional', 'default': None}, 'bins': {'type': 'Union', 'default': 30}, 'color': {'type': 'str', 'default': 'steelblue'}, 'opacity': {'type': 'float', 'default': 0.7}, 'show_kde': {'type': 'bool', 'default': True}, 'normalize': {'type': 'bool', 'default': False}, 'log_scale': {'type': 'bool', 'default': False}, 'title_prefix': {'type': 'str', 'default': 'Histogram of'}, 'width': {'type': 'int', 'default': 1200}, 'height': {'type': 'int', 'default': 800}, 'n_cols': {'type': 'int', 'default': 2}, 'vertical_spacing': {'type': 'float', 'default': 0.15}, 'horizontal_spacing': {'type': 'float', 'default': 0.1}} | ['tabular_data', 'visualization', 'data_quality'] | ['classification', 'regression', 'clustering'] |

| validmind.plots.ViolinPlot | Violin Plot | Generates interactive violin plots for numerical features using Plotly.... | False | False | ['dataset'] | {'columns': {'type': 'Optional', 'default': None}, 'group_by': {'type': 'Optional', 'default': None}, 'width': {'type': 'int', 'default': 800}, 'height': {'type': 'int', 'default': 600}} | ['tabular_data', 'visualization', 'distribution'] | ['classification', 'regression', 'clustering'] |

| validmind.prompt_validation.Bias | Bias | Assesses potential bias in a Large Language Model by analyzing the distribution and order of exemplars in the... | False | True | ['model'] | {'min_threshold': {'type': '_empty', 'default': 7}, 'judge_llm': {'type': '_empty', 'default': None}} | ['llm', 'few_shot'] | ['text_classification', 'text_summarization'] |

| validmind.prompt_validation.Clarity | Clarity | Evaluates and scores the clarity of prompts in a Large Language Model based on specified guidelines.... | False | True | ['model'] | {'min_threshold': {'type': '_empty', 'default': 7}, 'judge_llm': {'type': '_empty', 'default': None}} | ['llm', 'zero_shot', 'few_shot'] | ['text_classification', 'text_summarization'] |

| validmind.prompt_validation.Conciseness | Conciseness | Analyzes and grades the conciseness of prompts provided to a Large Language Model.... | False | True | ['model'] | {'min_threshold': {'type': '_empty', 'default': 7}, 'judge_llm': {'type': '_empty', 'default': None}} | ['llm', 'zero_shot', 'few_shot'] | ['text_classification', 'text_summarization'] |

| validmind.prompt_validation.Delimitation | Delimitation | Evaluates the proper use of delimiters in prompts provided to Large Language Models.... | False | True | ['model'] | {'min_threshold': {'type': '_empty', 'default': 7}, 'judge_llm': {'type': '_empty', 'default': None}} | ['llm', 'zero_shot', 'few_shot'] | ['text_classification', 'text_summarization'] |

| validmind.prompt_validation.NegativeInstruction | Negative Instruction | Evaluates and grades the use of affirmative, proactive language over negative instructions in LLM prompts.... | False | True | ['model'] | {'min_threshold': {'type': '_empty', 'default': 7}, 'judge_llm': {'type': '_empty', 'default': None}} | ['llm', 'zero_shot', 'few_shot'] | ['text_classification', 'text_summarization'] |

| validmind.prompt_validation.Robustness | Robustness | Assesses the robustness of prompts provided to a Large Language Model under varying conditions and contexts. This test... | False | True | ['model', 'dataset'] | {'num_tests': {'type': '_empty', 'default': 10}, 'judge_llm': {'type': '_empty', 'default': None}} | ['llm', 'zero_shot', 'few_shot'] | ['text_classification', 'text_summarization'] |

| validmind.prompt_validation.Specificity | Specificity | Evaluates and scores the specificity of prompts provided to a Large Language Model (LLM), based on clarity, detail,... | False | True | ['model'] | {'min_threshold': {'type': '_empty', 'default': 7}, 'judge_llm': {'type': '_empty', 'default': None}} | ['llm', 'zero_shot', 'few_shot'] | ['text_classification', 'text_summarization'] |