Quickstart for model validation

Learn the basics of using ValidMind to validate models as part of a model validation workflow. Set up the ValidMind Library in your environment, and generate a draft of a validation report using ValidMind tests for a binary classification model.

To validate a model with the ValidMind Library, we'll:

- Import a sample dataset and preprocess it, then split the datasets and initialize them for use with ValidMind

- Independently verify data quality tests performed on datasets by model development

- Import a champion model for evaluation

- Run model evaluation tests with the ValidMind Library, which will send the results of those tests to the ValidMind Platform

Introduction

Model validation aims to independently assess the compliance of champion models created by model developers with regulatory guidance by conducting thorough testing and analysis, potentially including the use of challenger models to benchmark performance. Assessments, presented in the form of a validation report, typically include artifacts (findings) and recommendations to address those issues.

A binary classification model is a type of predictive model used in churn analysis to identify customers who are likely to leave a service or subscription by analyzing various behavioral, transactional, and demographic factors.

- This model helps businesses take proactive measures to retain at-risk customers by offering personalized incentives, improving customer service, or adjusting pricing strategies.

- Effective validation of a churn prediction model ensures that businesses can accurately identify potential churners, optimize retention efforts, and enhance overall customer satisfaction while minimizing revenue loss.

About ValidMind

ValidMind is a suite of tools for managing model risk, including risk associated with AI and statistical models.

You use the ValidMind Library to automate comparison and other validation tests, and then use the ValidMind Platform to submit compliance assessments of champion models via comprehensive validation reports. Together, these products simplify model risk management, facilitate compliance with regulations and institutional standards, and enhance collaboration between yourself and model developers.

Before you begin

This notebook assumes you have basic familiarity with Python, including an understanding of how functions work. If you are new to Python, you can still run the notebook but we recommend further familiarizing yourself with the language.

If you encounter errors due to missing modules in your Python environment, install the modules with pip install, and then re-run the notebook. For more help, refer to Installing Python Modules.

New to ValidMind?

If you haven't already seen our documentation on the ValidMind Library, we recommend you begin by exploring the available resources in this section. There, you can learn more about documenting models and running tests, as well as find code samples and our Python Library API reference.

Register with ValidMind

Key concepts

Validation report: A comprehensive and structured assessment of a model’s development and performance, focusing on verifying its integrity, appropriateness, and alignment with its intended use. It includes analyses of model assumptions, data quality, performance metrics, outcomes of testing procedures, and risk considerations. The validation report supports transparency, regulatory compliance, and informed decision-making by documenting the validator’s independent review and conclusions.

Validation report template: Serves as a standardized framework for conducting and documenting model validation activities. It outlines the required sections, recommended analyses, and expected validation tests, ensuring consistency and completeness across validation reports. The template helps guide validators through a systematic review process while promoting comparability and traceability of validation outcomes.

Tests: A function contained in the ValidMind Library, designed to run a specific quantitative test on the dataset or model. Tests are the building blocks of ValidMind, used to evaluate and document models and datasets.

Metrics: A subset of tests that do not have thresholds. In the context of this notebook, metrics and tests can be thought of as interchangeable concepts.

Custom metrics: Custom metrics are functions that you define to evaluate your model or dataset. These functions can be registered with the ValidMind Library to be used in the ValidMind Platform.

Inputs: Objects to be evaluated and documented in the ValidMind Library. They can be any of the following:

- model: A single model that has been initialized in ValidMind with vm.init_model().

- dataset: A single dataset that has been initialized in ValidMind with vm.init_dataset().

- models: A list of ValidMind models, usually used when you want to compare multiple models in your custom metric.

- datasets: A list of ValidMind datasets, usually used when you want to compare multiple datasets in your custom metric. (Learn more: Run tests with multiple datasets)

Parameters: Additional arguments that can be passed when running a ValidMind test, used to pass additional information to a metric, customize its behavior, or provide additional context.

Outputs: Custom metrics can return elements like tables or plots. Tables may be a list of dictionaries (each representing a row) or a pandas DataFrame. Plots may be matplotlib or plotly figures.
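As a minimal sketch of the table output described above, a metric-style function might return a list of dictionaries, one per row. The function name `missing_value_table` and the sample rows are hypothetical, and registering the function with the ValidMind Library is deliberately left out:

```python
# Hypothetical metric-style function: returns a table as a list of dicts,
# one dictionary per row. It is not part of the ValidMind Library.
def missing_value_table(rows, columns):
    """Count missing (None) values per column."""
    table = []
    for column in columns:
        missing = sum(1 for row in rows if row.get(column) is None)
        table.append({
            "column": column,
            "missing": missing,
            "missing_pct": round(100 * missing / len(rows), 1) if rows else 0.0,
        })
    return table


# Made-up sample data, for illustration only
sample_rows = [
    {"Age": 42, "Balance": 0.0},
    {"Age": None, "Balance": 1500.5},
    {"Age": 37, "Balance": None},
    {"Age": 29, "Balance": 820.0},
]
result = missing_value_table(sample_rows, ["Age", "Balance"])
print(result)
```

The same rows could equally be wrapped in a pandas DataFrame, the other table form mentioned above.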

Setting up

Register a sample model

In a usual model lifecycle, a champion model will have been independently registered in your model inventory and submitted to you for validation by your model development team as part of the effective challenge process. (Learn more: Submit for approval)

For this notebook, we'll have you register a dummy model in the ValidMind Platform inventory and assign yourself as the validator to familiarize you with the ValidMind interface and circumvent the need for an existing model:

In a browser, log in to ValidMind.

In the left sidebar, navigate to Inventory and click + Register Model.

Enter the model details and click Next > to continue to assignment of model stakeholders. (Need more help?)

Select your own name under the MODEL OWNER drop-down — don’t worry, we’ll adjust these permissions next for validation.

Click Register Model to add the model to your inventory.

Assign validator credentials

In order to log tests as a validator instead of as a developer, on the model details page that appears after you've successfully registered your sample model:

Remove yourself as a model owner:

- Click on the OWNERS tile.

- Click the x next to your name to remove yourself from that model's role.

- Click Save to apply your changes to that role.

Remove yourself as a developer:

- Click on the DEVELOPERS tile.

- Click the x next to your name to remove yourself from that model's role.

- Click Save to apply your changes to that role.

Add yourself as a validator:

- Click on the VALIDATORS tile.

- Select your name from the drop-down menu.

- Click Save to apply your changes to that role.

Apply validation report template

Next, let's select a validation report template. A template predefines sections for your report and provides a general outline to follow, making the validation process much easier.

In the left sidebar that appears for your model, click Documents and select Validation.

Under TEMPLATE, select Generic Validation Report.

Click Use Template to apply the template.

Install the ValidMind Library

Python 3.8 <= x <= 3.14

To install the library:

%pip install -q validmind

Initialize the ValidMind Library

Get your code snippet

Initialize the ValidMind Library with the code snippet unique to each model per document, ensuring your test results are uploaded to the correct model and automatically populated in the right document in the ValidMind Platform when you run this notebook.

- On the left sidebar that appears for your model, select Getting Started and select Validation from the DOCUMENT drop-down menu.

- Click Copy snippet to clipboard.

- Next, load your model identifier credentials from an .env file or replace the placeholder with your own code snippet:

# Load your model identifier credentials from an `.env` file
%load_ext dotenv
%dotenv .env

# Or replace with your code snippet
import validmind as vm

vm.init(
    # api_host="...",
    # api_key="...",
    # api_secret="...",
    # model="...",
    document="validation-report",
)

Initialize the Python environment

Then, let's import the necessary libraries and set up your Python environment for data analysis by enabling matplotlib, a plotting library used for visualizing data.

This ensures that any plots you generate will render inline in our notebook output rather than opening in a separate window:

%matplotlib inline

Getting to know ValidMind

Preview the validation report template

Let's verify that you have connected the ValidMind Library to the ValidMind Platform and that the appropriate template is selected for model validation. A template predefines sections for your validation report and provides a general outline to follow, making the validation process much easier.

You will attach evidence to this template in the form of risk assessment notes, artifacts, and test results later on. For now, take a look at the default structure that the template provides with the vm.preview_template() function from the ValidMind library:

vm.preview_template()

View validation report in the ValidMind Platform

Next, let's head to the ValidMind Platform to see the template in action:

In a browser, log in to ValidMind.

In the left sidebar, navigate to Inventory and select the model you registered for this notebook.

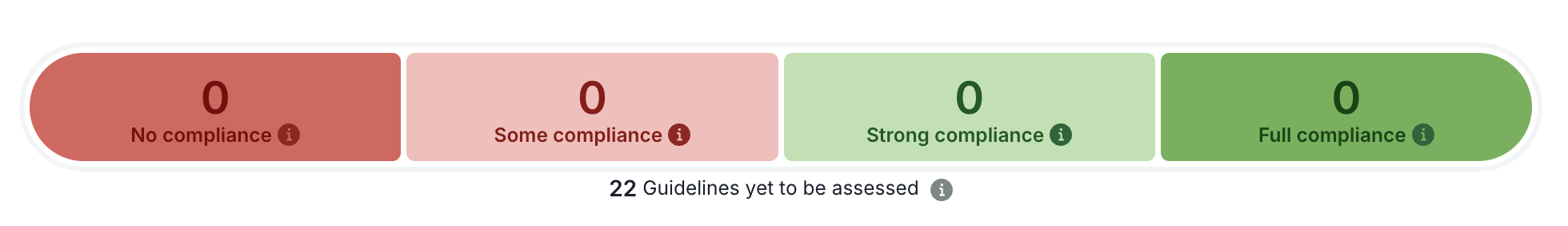

Click Validation under Documents for your model and note:

Importing the sample dataset

Load the sample dataset

First, let's import the public Bank Customer Churn Prediction dataset from Kaggle, which was used to develop the dummy champion model.

We'll use this dataset to review steps that should have been conducted during the initial development and documentation of the model to ensure that the model was built correctly. By independently performing steps taken by the model development team, we can confirm whether the model was built using appropriate and properly processed data.

In the example below, note that:

- The target column, Exited, has a value of 1 when a customer has churned and 0 otherwise.

- The ValidMind Library provides a wrapper to automatically load the dataset as a Pandas DataFrame object. A Pandas DataFrame is a two-dimensional tabular data structure that makes use of rows and columns.

from validmind.datasets.classification import customer_churn

print(
    f"Loaded demo dataset with: \n\n\t• Target column: '{customer_churn.target_column}' \n\t• Class labels: {customer_churn.class_labels}"
)

raw_df = customer_churn.load_data()
raw_df.head()

Preprocess the raw dataset

Let's say that thanks to the documentation submitted by the model development team (Learn more ...), we know that the sample dataset was first preprocessed before being used to train the champion model.

During model validation, we use the same data processing logic and training procedure to confirm that the model's results can be reproduced independently, so let's also start by preprocessing our imported dataset to verify that preprocessing was done correctly. This involves splitting the data and separating the features (inputs) from the targets (outputs).
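To make the two preprocessing steps concrete, here is a minimal pure-Python sketch of a three-way split followed by separating features from the target. It is an illustration only: the helper names, the 60/20/20 fractions, and the toy rows are assumptions, and it does not reproduce what `customer_churn.preprocess()` does internally.

```python
import random


def three_way_split(rows, validation_frac=0.2, test_frac=0.2, seed=42):
    """Shuffle rows and split them into train, validation, and test lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    n_validation = int(len(shuffled) * validation_frac)
    test = shuffled[:n_test]
    validation = shuffled[n_test:n_test + n_validation]
    train = shuffled[n_test + n_validation:]
    return train, validation, test


def separate_features_and_target(rows, target_column):
    """Return (features, targets), dropping the target column from each row."""
    features = [{k: v for k, v in row.items() if k != target_column} for row in rows]
    targets = [row[target_column] for row in rows]
    return features, targets


# Toy rows standing in for the churn data (made up for illustration)
toy_rows = [{"Age": 20 + i, "Exited": i % 2} for i in range(10)]
toy_train, toy_validation, toy_test = three_way_split(toy_rows)
toy_x_train, toy_y_train = separate_features_and_target(toy_train, "Exited")
print(len(toy_train), len(toy_validation), len(toy_test))
```

Fixing the seed keeps the split reproducible, which matters when a validator needs to rerun the same steps as the development team.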

Split the dataset

Splitting our dataset helps assess how well the model generalizes to unseen data.

Use preprocess() to split our dataset into three subsets:

- train_df — Used to train the model.

- validation_df — Used to evaluate the model's performance during training.

- test_df — Used later on to assess the model's performance on new, unseen data.

train_df, validation_df, test_df = customer_churn.preprocess(raw_df)

Separate features and targets

To train the model, we need to provide it with:

- Inputs — Features such as customer age, usage, etc.

- Outputs (Expected answers/labels) — in our case, we would like to know whether the customer churned or not.

Here, we'll use x_train to hold the input features, and y_train to hold the target variable — the values we want the model to predict:

x_train = train_df.drop(customer_churn.target_column, axis=1)
y_train = train_df[customer_churn.target_column]

Running data quality tests

With everything ready to go, let's explore some of ValidMind's available tests to help us assess the quality of our datasets. Using ValidMind’s repository of tests streamlines your validation testing, and helps you ensure that your models are being validated appropriately.

Identify qualitative tests

We want to narrow down the tests we want to run from the selection provided by ValidMind, so we'll use the vm.tests.list_tasks_and_tags() function to list which tags are associated with each task type:

- tasks represent the kind of modeling task associated with a test. Here we'll focus on classification tasks.

- tags are free-form descriptions providing more details about the test, for example, what category the test falls into. Here we'll focus on the data_quality tag.

vm.tests.list_tasks_and_tags()

Then we'll call the vm.tests.list_tests() function to list all the data quality tests for classification:

vm.tests.list_tests(
    tags=["data_quality"], task="classification"
)

Initialize the ValidMind datasets

Before you can run tests with your preprocessed datasets, you must first initialize a ValidMind Dataset object using the init_dataset function from the ValidMind (vm) module. This step is necessary whenever you want to connect a dataset to documentation and produce test results through ValidMind, but you only need to do it once per dataset.

For this example, we'll pass in the following arguments:

- dataset: The raw dataset that you want to provide as input to tests.

- input_id: A unique identifier that allows tracking what inputs are used when running each individual test.

- target_column: A required argument if tests require access to true values. This is the name of the target column in the dataset.

- class_labels: An optional value to map predicted classes to class labels.

# Initialize the raw dataset
vm_raw_dataset = vm.init_dataset(
    dataset=raw_df,
    input_id="raw_dataset",
    target_column=customer_churn.target_column,
    class_labels=customer_churn.class_labels,
)

# Initialize the training dataset
vm_train_ds = vm.init_dataset(
    dataset=train_df,
    input_id="train_dataset",
    target_column=customer_churn.target_column,
)

# Initialize the validation dataset
vm_validation_ds = vm.init_dataset(
    dataset=validation_df,
    input_id="validation_dataset",
    target_column=customer_churn.target_column,
)

# Initialize the testing dataset
vm_test_ds = vm.init_dataset(
    dataset=test_df,
    input_id="test_dataset",
    target_column=customer_churn.target_column,
)

Run an individual data quality test

Next, we'll use our previously initialized raw dataset (vm_raw_dataset) as input to run an individual test, then log the result to the ValidMind Platform.

- You run validation tests by calling the run_test function provided by the validmind.tests module.

- Every test result returned by the run_test() function has a .log() method that can be used to send the test results to the ValidMind Platform.

Here, we'll use the ClassImbalance test as an example:

vm.tests.run_test(
    test_id="validmind.data_validation.ClassImbalance",
    inputs={
        "dataset": vm_raw_dataset
    }
).log()

If the output includes a message indicating that the logged result is not yet part of your report, that's expected: when we run validation tests, the logged results need to be manually added to your report as part of your compliance assessment process within the ValidMind Platform. You'll continue to see this message throughout this notebook as we run and log more tests.
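For intuition about what a class imbalance check looks at, the following is a minimal pure-Python sketch that computes class proportions and flags any class below a minimum share. The helper names and the 10% threshold are assumptions made for illustration; this is not the ValidMind implementation of ClassImbalance.

```python
from collections import Counter


def class_proportions(labels):
    """Return the share of each class label in the data."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def is_imbalanced(labels, min_proportion=0.1):
    """Flag the labels if any class falls below min_proportion (assumed threshold)."""
    return any(p < min_proportion for p in class_proportions(labels).values())


# Made-up labels: 1 means churned, 0 means retained
toy_labels = [0] * 8 + [1] * 2
toy_proportions = class_proportions(toy_labels)
print(toy_proportions, is_imbalanced(toy_labels))
```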

Run data comparison tests

We can also use ValidMind to perform comparison tests between our datasets, again logging the results to the ValidMind Platform. Below, we'll perform two sets of comparison tests with a mix of our datasets and the same class imbalance test:

- When running individual tests, you can use a custom result_id to tag the individual result with a unique identifier, appended to the test_id with a : separator.

- We can specify all the tests we'd like to run in a dictionary called test_config, and we'll pass in an input_grid of individual test inputs to compare. In this case, we'll input our two datasets for comparison. Note here that the input_grid expects the input_id of the dataset as the value rather than the variable name we specified.
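The two conventions above can be pictured with a small pure-Python sketch: splitting a `test_id:result_id` string, and expanding a grid into one set of inputs per combination. Both helpers are hypothetical, and reading a grid as every combination of its listed values is an assumption for illustration rather than a description of ValidMind internals.

```python
from itertools import product


def split_test_id(full_id):
    """Split 'test_id:result_id' into its parts; result_id is None if absent."""
    test_id, _, result_id = full_id.partition(":")
    return test_id, (result_id or None)


def expand_input_grid(input_grid):
    """Return one inputs dict per combination of the values in the grid."""
    keys = list(input_grid)
    combos = product(*(input_grid[key] for key in keys))
    return [dict(zip(keys, combo)) for combo in combos]


parsed = split_test_id("validmind.data_validation.ClassImbalance:train_vs_test")
expanded = expand_input_grid({"dataset": ["train_dataset", "test_dataset"]})
print(parsed)
print(expanded)
```

Note that the grid values here are input_id strings such as "train_dataset", matching the point above about passing the input_id rather than the variable name.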

# Individual test config with inputs specified
test_config = {
    # Comparison between training and validation datasets to check if class balance is the same in both sets
    "validmind.data_validation.ClassImbalance:train_vs_validation": {
        "input_grid": {"dataset": ["train_dataset", "validation_dataset"]}
    },
    # Comparison between training and testing datasets to confirm that both sets have similar class distributions
    "validmind.data_validation.ClassImbalance:train_vs_test": {
        "input_grid": {"dataset": ["train_dataset", "test_dataset"]},
    },
}

Then batch run and log our tests in test_config:

for t in test_config:
    print(t)
    try:
        # Check if test has input_grid
        if 'input_grid' in test_config[t]:
            # For tests with input_grid, pass the input_grid configuration
            if 'params' in test_config[t]:
                vm.tests.run_test(t, input_grid=test_config[t]['input_grid'], params=test_config[t]['params']).log()
            else:
                vm.tests.run_test(t, input_grid=test_config[t]['input_grid']).log()
        else:
            # Original logic for regular inputs
            if 'params' in test_config[t]:
                vm.tests.run_test(t, inputs=test_config[t]['inputs'], params=test_config[t]['params']).log()
            else:
                vm.tests.run_test(t, inputs=test_config[t]['inputs']).log()
    except Exception as e:
        print(f"Error running test {t}: {str(e)}")

Importing the champion model

With our raw dataset preprocessed, let's go ahead and import the champion model submitted by the model development team in the format of a .pkl file: xgboost_model_champion.pkl

# Import the champion model
import joblib

xgboost = joblib.load("xgboost_model_champion.pkl")

Initialize a model object

In addition to the initialized datasets, you'll also need to initialize a ValidMind model object (vm_model) that can be passed to other functions for analysis and tests on the data for our champion model.

You simply initialize this model object with vm.init_model():

# Initialize the champion XGBoost model
vm_xgboost = vm.init_model(
    xgboost,
    input_id="xgboost_champion",
)

Assign predictions

Once the model has been registered, you can assign model predictions to the training and testing datasets.

- The assign_predictions() method from the Dataset object can link existing predictions to any number of models.

- This method links the model's class prediction values and probabilities to our vm_train_ds and vm_test_ds datasets.

If no prediction values are passed, the method will compute predictions automatically:

vm_train_ds.assign_predictions(
    model=vm_xgboost,
)

vm_test_ds.assign_predictions(
    model=vm_xgboost,
)

Running model evaluation tests

With our setup complete, let's run the rest of our validation tests. Since we have already verified the data quality of the dataset used to train our champion model, we will now focus on evaluating the model's performance.

Run model performance tests

First, let's run some performance tests. Use vm.tests.list_tests() to identify all the model performance tests for classification:

vm.tests.list_tests(tags=["model_performance"], task="classification")

We'll isolate the specific tests we want to run in mpt, and append an identifier for our champion model to each test ID as the result_id, using a : separator as we did for the comparison tests above:

mpt = [
    "validmind.model_validation.sklearn.ClassifierPerformance:xgboost_champion",
    "validmind.model_validation.sklearn.ConfusionMatrix:xgboost_champion",
    "validmind.model_validation.sklearn.ROCCurve:xgboost_champion",
]

Now, let's run and log our batch of model performance tests using our testing dataset (vm_test_ds) for our champion model:

- The test set serves as a proxy for real-world data, providing an unbiased estimate of model performance since it was not used during training or tuning.

- The test set also acts as protection against selection bias and model tweaking, giving a final, more unbiased checkpoint.
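As a rough illustration of the kind of summary a confusion matrix provides on held-out data, here is a minimal pure-Python sketch. The helper names and the toy labels are made up, and the ValidMind tests listed in mpt compute their own, more complete versions of these figures.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    return {
        "tp": sum(1 for t, p in pairs if t == positive and p == positive),
        "fp": sum(1 for t, p in pairs if t != positive and p == positive),
        "tn": sum(1 for t, p in pairs if t != positive and p != positive),
        "fn": sum(1 for t, p in pairs if t == positive and p != positive),
    }


def precision_recall(counts):
    """Derive precision and recall, returning 0.0 when a denominator is zero."""
    predicted_pos = counts["tp"] + counts["fp"]
    actual_pos = counts["tp"] + counts["fn"]
    precision = counts["tp"] / predicted_pos if predicted_pos else 0.0
    recall = counts["tp"] / actual_pos if actual_pos else 0.0
    return precision, recall


# Toy test-set labels and predictions (1 = churned), for illustration only
toy_y_true = [1, 0, 1, 1, 0, 0, 1, 0]
toy_y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
toy_counts = confusion_counts(toy_y_true, toy_y_pred)
toy_precision, toy_recall = precision_recall(toy_counts)
print(toy_counts, toy_precision, toy_recall)
```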

for test in mpt:
    vm.tests.run_test(
        test,
        inputs={
            "dataset": vm_test_ds,
            "model": vm_xgboost,
        },
    ).log()

Run diagnostic tests

Next, we want to inspect the robustness and stability of our champion model. Use list_tests() to list all available diagnosis tests applicable to classification tasks:

vm.tests.list_tests(tags=["model_diagnosis"], task="classification")

Let's now assess the model for potential signs of overfitting and identify any sub-segments where performance may be inconsistent.

Overfitting occurs when a model learns the training data too well, capturing not only the true pattern but also noise and random fluctuations, resulting in excellent performance on the training dataset but poor generalization to new, unseen data:

- Since the training dataset (vm_train_ds) was used to fit the model, we use this set to establish a baseline performance for how well the model performs on data it has already seen.

- The testing dataset (vm_test_ds) was never seen during training, and here simulates real-world generalization, or how well the model performs on new, unseen data.
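The train-versus-test comparison can be sketched in a few lines of pure Python: compute accuracy per segment on each dataset and flag segments where the gap is large. The segment names, the records, and the 0.1 gap threshold are assumptions for illustration, and this is not how the OverfitDiagnosis test is implemented.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """records: iterable of (segment, y_true, y_pred) tuples."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for segment, y_true, y_pred in records:
        totals[segment] += 1
        hits[segment] += int(y_true == y_pred)
    return {segment: hits[segment] / totals[segment] for segment in totals}


def overfit_gaps(train_records, test_records, max_gap=0.1):
    """Report the train-minus-test accuracy gap per segment (assumed threshold)."""
    train_acc = accuracy_by_segment(train_records)
    test_acc = accuracy_by_segment(test_records)
    report = {}
    for segment in train_acc:
        if segment in test_acc:
            gap = train_acc[segment] - test_acc[segment]
            report[segment] = {"gap": gap, "flagged": gap > max_gap}
    return report


# Made-up records: the model is perfect on training data but not on test data
toy_train = [("young", 1, 1), ("young", 0, 0), ("old", 1, 1), ("old", 0, 0)]
toy_test = [("young", 1, 1), ("young", 0, 0), ("old", 1, 0), ("old", 0, 0)]
toy_report = overfit_gaps(toy_train, toy_test)
print(toy_report)
```

A large positive gap in one segment suggests the model memorized patterns there that do not carry over to unseen data.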

vm.tests.run_test(
    test_id="validmind.model_validation.sklearn.OverfitDiagnosis:xgboost_champion",
    input_grid={
        "datasets": [[vm_train_ds, vm_test_ds]],
        "model": [vm_xgboost],
    }
).log()

Let's also conduct robustness and stability tests.

- Robustness evaluates the model’s ability to maintain consistent performance under varying input conditions.

- Stability assesses whether the model produces consistent outputs across different data subsets or over time.
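One simple way to picture a robustness check is to perturb the inputs with increasing amounts of noise and watch how accuracy changes. The sketch below does this in pure Python with a toy threshold model; the model, the data, and the noise levels are all hypothetical, and this is not a description of how RobustnessDiagnosis works internally.

```python
import random


def threshold_model(x, threshold=0.5):
    """Toy classifier: predicts 1 when the single feature reaches the threshold."""
    return 1 if x >= threshold else 0


def accuracy(model, xs, ys):
    """Share of inputs for which the model's prediction matches the label."""
    return sum(model(x) == y for x, y in zip(xs, ys)) / len(xs)


def robustness_curve(model, xs, ys, noise_levels, seed=0):
    """Accuracy after adding Gaussian noise of each standard deviation to the inputs."""
    rng = random.Random(seed)
    curve = {}
    for sigma in noise_levels:
        noisy = [x + rng.gauss(0.0, sigma) for x in xs]
        curve[sigma] = accuracy(model, noisy, ys)
    return curve


# Made-up feature values and labels, for illustration only
toy_xs = [0.1, 0.2, 0.4, 0.6, 0.8, 0.9]
toy_ys = [0, 0, 0, 1, 1, 1]
toy_curve = robustness_curve(threshold_model, toy_xs, toy_ys, noise_levels=[0.0, 0.1, 0.5])
print(toy_curve)
```

Running the same curve on several data subsets would give a rough view of stability as well.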

Again, we'll use both the training and testing datasets to establish baseline performance and to simulate real-world generalization:

vm.tests.run_test(
    test_id="validmind.model_validation.sklearn.RobustnessDiagnosis:xgboost_champion",
    input_grid={
        "datasets": [[vm_train_ds, vm_test_ds]],
        "model": [vm_xgboost],
    },
).log()

Run feature importance tests

We also want to verify the relative influence of different input features on our model's predictions. Use list_tests() to identify all the feature importance tests for classification and store them in FI:

# Store the feature importance tests
FI = vm.tests.list_tests(tags=["feature_importance"], task="classification", pretty=False)
FI

We'll only use our testing dataset (vm_test_ds) here, to provide a realistic, unseen sample that mimics future or production data, as the training dataset has already influenced our model during learning:

# Run and log our feature importance tests with the testing dataset
for test in FI:
    vm.tests.run_test(
        f"{test}:xgboost_champion",
        inputs={
            "dataset": vm_test_ds,
            "model": vm_xgboost,
        },
    ).log()

In summary

In this notebook, you learned how to:

In a usual model validation workflow, you would wrap up your validation testing by verifying that all the tests provided by the model development team were run and reported accurately, and perhaps even propose a challenger model, comparing the performance of the challenger with the running champion.

- Specify all the tests you'd like to independently rerun, just like you did in the step Run data comparison tests

- Evaluate the performance of a challenger model against the champion, just like you did in the steps under Running model evaluation tests

Next steps

You can look at the output produced by the ValidMind Library right in the notebook where you ran the code, as you would expect. But there is a better way — use the ValidMind Platform to work with your validation report.

Work with your validation report

Now that you've logged all your test results and verified the work done by the model development team, head to the ValidMind Platform to wrap up your validation report:

From the Inventory in the ValidMind Platform, go to the model you connected to earlier.

In the left sidebar that appears for your model, click Validation under Documents.

Include your logged test results as evidence, create risk assessment notes, add artifacts, and assess compliance, then submit your report for review when it's ready. Learn more: Preparing validation reports

Discover more learning resources

For a more in-depth introduction to using the ValidMind Library for validation, check out our introductory validation series and the accompanying interactive training:

We offer many interactive notebooks to help you automate testing, documenting, validating, and more:

Or, visit our documentation to learn more about ValidMind.

Upgrade ValidMind

Retrieve the information for the currently installed version of ValidMind:

%pip show validmind

If the version returned is lower than the version indicated in our production open-source code, restart your notebook and run:

%pip install --upgrade validmind

You may need to restart your kernel after running the upgrade package for changes to be applied.
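If you prefer to check the installed version from Python, a minimal sketch using the standard library's `importlib.metadata` might look like the following. The helper names are hypothetical, the reference version is a placeholder you would replace with the version you are comparing against, and only plain dotted numeric versions are handled.

```python
import re
from importlib.metadata import PackageNotFoundError, version


def installed_version(package):
    """Return the installed version string, or None if the package is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def is_older(installed, reference):
    """Compare dotted versions numerically; suffixes such as 'rc1' are ignored."""
    def as_tuple(value):
        parts = []
        for part in value.split("."):
            digits = re.match(r"\d+", part)
            if digits is None:
                break
            parts.append(int(digits.group()))
        return tuple(parts)
    return as_tuple(installed) < as_tuple(reference)


reference_version = "2.8.10"  # placeholder, not an actual release recommendation
current = installed_version("validmind")
if current is None:
    print("validmind is not installed")
elif is_older(current, reference_version):
    print(f"validmind {current} is older than {reference_version}")
else:
    print(f"validmind {current} is up to date relative to {reference_version}")
```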

Copyright © 2023-2026 ValidMind Inc. All rights reserved.

Refer to LICENSE for details.

SPDX-License-Identifier: AGPL-3.0 AND ValidMind Commercial